December 2025 has been a landmark month for artificial intelligence, with major model releases, strategic industry partnerships, and significant policy developments reshaping the AI landscape. From Anthropic’s groundbreaking Claude Opus 4.5 to Google’s Gemini 3 and the US government’s Genesis Mission, this month has set the stage for AI’s evolution in 2026. Join us as we explore the most significant AI news and analyze what these developments mean for businesses, researchers, and society.

Major AI Model Releases and Breakthroughs

Claude Opus 4.5 has set new benchmarks in AI performance, particularly in software engineering tasks

Claude Opus 4.5: The First AI to Outperform Human Engineers

Anthropic’s Claude Opus 4.5, released in late November but dominating December discussions, represents a significant leap in AI capabilities. This model is the first to surpass the 80% threshold on the SWE-bench Verified benchmark (80.9%), outperforming competitors like Google Gemini 3 Pro (76.2%) and OpenAI GPT-5.1 (77.9%).

What makes Claude Opus 4.5 truly revolutionary is its performance in Anthropic’s internal engineering tests, where it outscored human candidates for engineering positions. The model excels in tool usage (98.1% on MCP Atlas) and computer interaction (66.3% on OSWorld), with remarkable abilities to:

- Autonomously fix bugs in GitHub repositories

- Coordinate multiple AI agents for complex tasks

- Perform long-term planning and self-improvement

- Integrate with browsers, terminals, and office applications

Anthropic has also cut the price to roughly a third of its previous level ($5 per million input tokens) and improved resistance to prompt injection. A new “effort” parameter lets users trade speed against depth of analysis, which suits enterprise automation.
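To make the pricing concrete, here is a minimal sketch that estimates input-token cost at the quoted $5 per million rate. The function name and the example token counts are illustrative assumptions, and the implied earlier price is simply an inference from the threefold reduction described above. Output-token pricing is left out because the figures here do not cover it.

```python
# Rate quoted in the article: $5 per million input tokens.
INPUT_PRICE_PER_MILLION_USD = 5.00
# Threefold price reduction described in the article.
PRICE_CUT_FACTOR = 3


def input_cost_usd(tokens: int,
                   price_per_million: float = INPUT_PRICE_PER_MILLION_USD) -> float:
    """Estimate the input-side cost of a request (hypothetical helper)."""
    return tokens / 1_000_000 * price_per_million


# Inference only: the earlier price implied by a threefold cut.
implied_previous_price = INPUT_PRICE_PER_MILLION_USD * PRICE_CUT_FACTOR

# Example workload (assumed numbers): 200,000 input tokens.
example_tokens = 200_000
print(f"Cost now:            ${input_cost_usd(example_tokens):.2f}")
print(f"Cost at implied old: ${input_cost_usd(example_tokens, implied_previous_price):.2f}")
```

At these rates the cost scales linearly with token count, so the same helper can be reused for rough budgeting of batch jobs.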

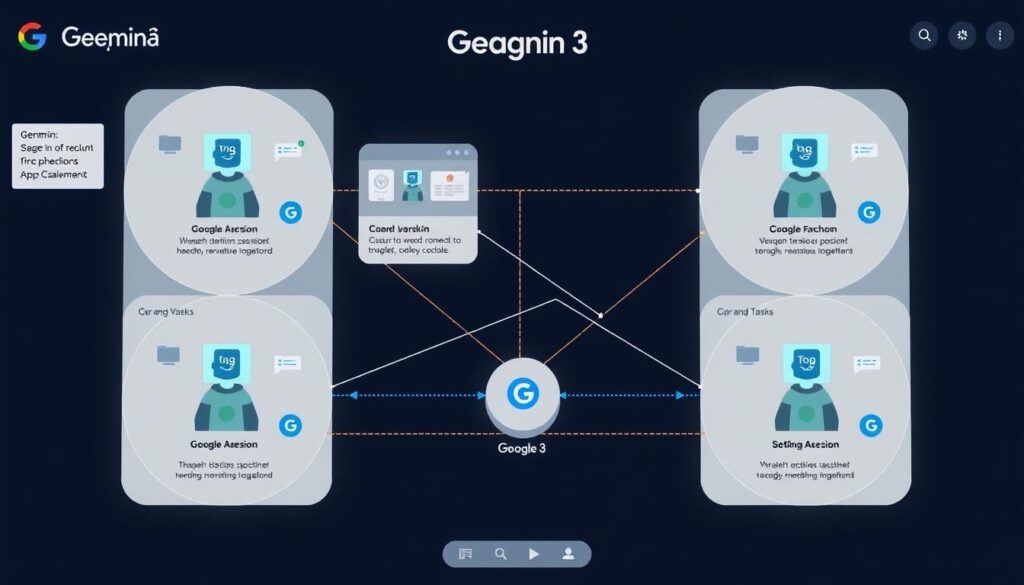

Gemini 3’s multi-agent capabilities enable complex workflows and reasoning

Google Unveils Gemini 3: Advanced Multi-Agent Capabilities

Google has answered Anthropic’s challenge with Gemini 3, its next-generation frontier model family. Positioned as its “most intelligent” system yet, Gemini 3 builds on previous versions by integrating native multimodality, long context handling, and agentic capabilities into a unified, multi-agent stack.

Key features of Gemini 3 include:

- A 1M token context window for processing extensive information

- State-of-the-art results on reasoning benchmarks like Humanity’s Last Exam and GPQA Diamond

- A dedicated “Deep Think” mode for intensive reasoning tasks

- Google Antigravity for developer workflows where agents can autonomously operate editors, terminals, and browsers

- Gemini Agent integration with Gmail, Calendar, and browsers for multi-step task execution

Perhaps most significantly, Gemini 3 underpins new “generative interfaces” in Google Search and the Gemini app, where the model renders dynamic visual layouts or custom UIs on demand. This tight integration into Chrome, Android, and the broader Google ecosystem positions Gemini as less of a standalone chatbot and more of an operating-system primitive for reasoning and orchestration.

Grok 4.1 focuses on reasoning, code generation, and real-time web integration

xAI Releases Grok 4.1 with Enhanced Reasoning

Not to be outdone, xAI has released Grok 4.1 as its new flagship model. While xAI did not publish a full technical report, its blog and benchmark tables showed Grok 4.1 closing much of the remaining gap with GPT-5-class systems on math and coding benchmarks.

The model is positioned as a multi-modal system with stronger reasoning, code generation, and real-time web integration than its predecessors. In practice, the most interesting aspect is not just improved benchmark scores but the move toward agents that blend search, tools, and messaging into a single environment.

Advances in AI Image Generation

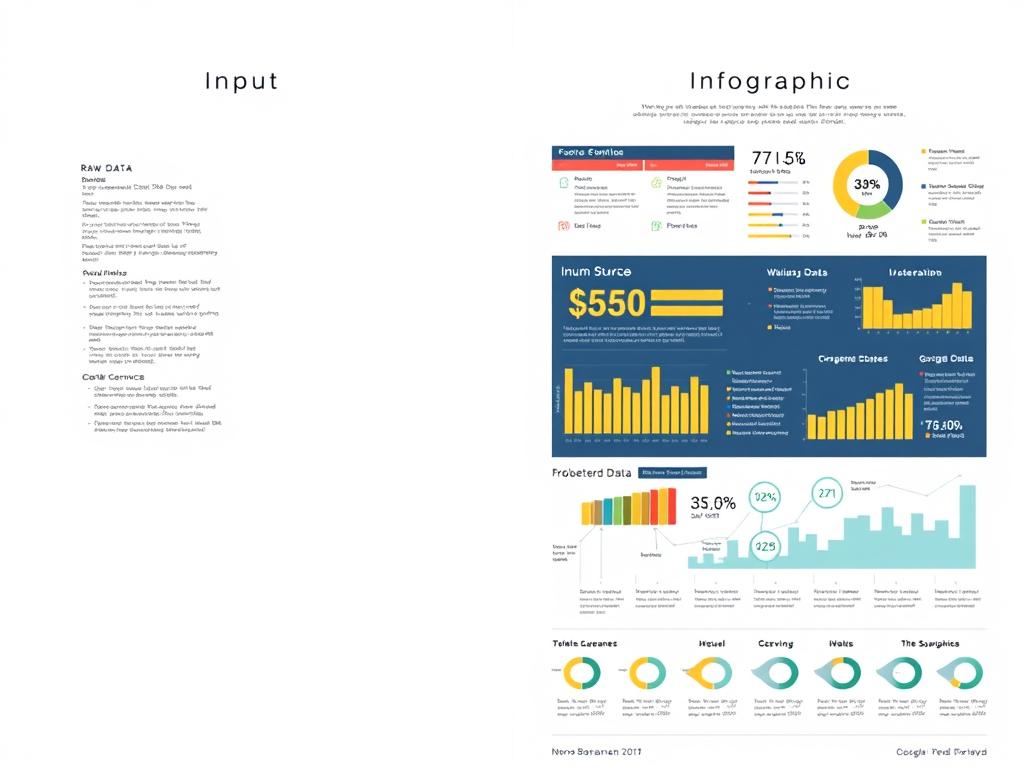

Nano Banana Pro excels at converting raw data into visually compelling infographics

Google’s Nano Banana Pro Revolutionizes Data Visualization

Google DeepMind has released Nano Banana Pro, positioned as the image layer of Gemini 3 Pro. This new image generation and editing model uses Gemini’s reasoning and real-world grounding to produce more accurate, context-rich visuals, with support for up to 14 input images and consistent rendering of up to five people in a scene.

What sets Nano Banana Pro apart is its optimization for legible, correctly rendered text directly in images, including multilingual layouts. The model excels at turning structured or unstructured inputs—spreadsheets, notes, recipes, weather data—into infographics, diagrams, and other data visualization outputs, making it particularly valuable for business and educational contexts.

FLUX.2 enables sophisticated multi-reference image composition with high-quality outputs

FLUX.2: Open-Weight Image Generation with 4MP Outputs

German visual AI company Black Forest Labs (BFL) has launched FLUX.2, a family of image generation and editing models capable of 4-megapixel outputs with up to 10 reference images, multi-reference composition, and significantly improved text rendering.

The company has released a full set of hosted models (Pro and Flex) and a 32B-parameter open-weight Dev checkpoint. FLUX.2 Dev supports 4MP editing, multi-reference conditioning, and 32K-token prompts, while the accompanying open-source VAE is licensed under Apache 2.0, enabling enterprises to integrate FLUX.2 into self-hosted workflows without vendor lock-in.

The model’s human-rated quality per unit of cost is reportedly unmatched, which makes it particularly useful for image generation and editing workflows in commercial settings.

Strategic Industry Partnerships and Infrastructure

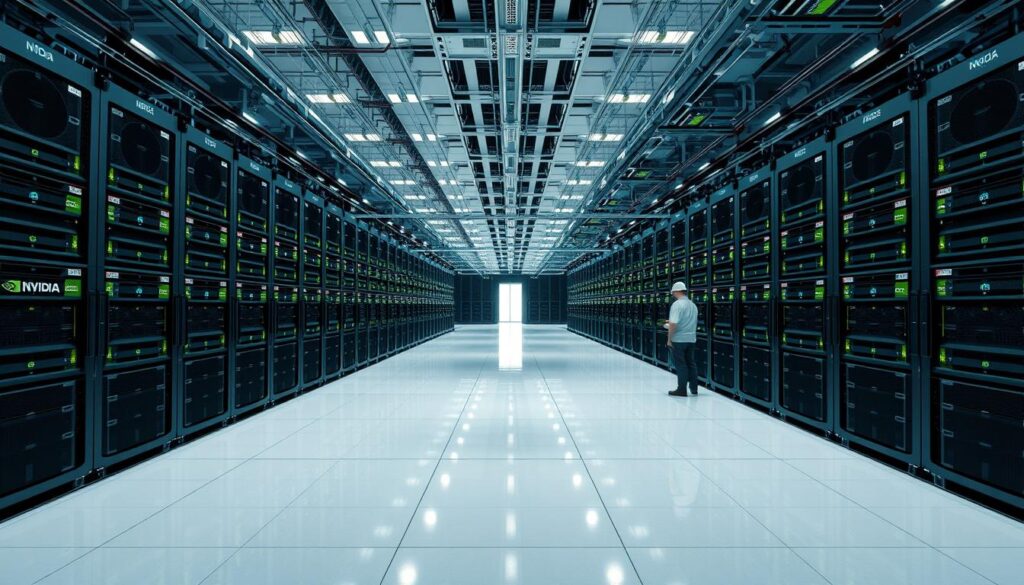

Microsoft and NVIDIA’s 1GW supercomputer cluster represents one of the largest AI infrastructure investments to date

Microsoft and NVIDIA’s $15B Investment in Anthropic

In a major industry development, Microsoft and NVIDIA have deepened their infrastructure partnership by agreeing to provide Anthropic with a 1 GW supercomputer cluster, powered by tens of thousands of NVIDIA GB300 GPUs. This deal will see Microsoft and NVIDIA invest up to $15 billion to support Anthropic’s training roadmap.

This partnership marks a notable shift in industry dynamics. Anthropic and NVIDIA have previously been at odds, notably when Anthropic’s Dario Amodei advocated for US government bans on exporting NVIDIA’s best chips to China, but the companies have set aside their differences to strike a deal that serves all parties.

OpenAI’s AWS partnership provides critical infrastructure redundancy beyond its Azure footprint

OpenAI Signs $38B Deal with Amazon Web Services

OpenAI has secured a new seven-year deal with Amazon Web Services, reported at around $38 billion of contracted spend on AWS infrastructure. This agreement gives OpenAI access to Amazon’s high-density EC2 UltraServers and a substantial allocation of NVIDIA accelerators as a complement to its existing Azure footprint.

This move is less about “multi-cloud” fashion and more about survivability: no single provider can credibly guarantee the power, chips, and land needed for GPT-class training runs over the rest of the decade. By diversifying its infrastructure partners, OpenAI is ensuring it can maintain its ambitious development roadmap regardless of potential supply chain or capacity constraints.

The Compute Arms Race Intensifies

NVIDIA’s latest quarterly earnings underscore the acceleration of the AI compute arms race. For the three months to October 26, NVIDIA reported $57.0 billion in revenue, up 22% quarter over quarter (QoQ) and 62% year over year (YoY), at a gross margin of 73%. Data center revenue was $51.2 billion, up 25% sequentially and 66% YoY.
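The growth rates above can be turned back into approximate prior-period figures. The sketch below does that arithmetic using only the numbers quoted in this section. Because the percentages are rounded, the results are estimates rather than reported values, and the helper name is an illustrative assumption.

```python
# Figures quoted in the article (USD billions; growth rates as fractions).
TOTAL_REVENUE = 57.0
TOTAL_QOQ, TOTAL_YOY = 0.22, 0.62
DATA_CENTER_REVENUE = 51.2
DC_QOQ, DC_YOY = 0.25, 0.66


def implied_prior(current: float, growth: float) -> float:
    """Back out the prior-period value from the current value and its growth rate."""
    return current / (1.0 + growth)


prior_quarter_total = implied_prior(TOTAL_REVENUE, TOTAL_QOQ)    # about 46.7
year_ago_total = implied_prior(TOTAL_REVENUE, TOTAL_YOY)         # about 35.2
prior_quarter_dc = implied_prior(DATA_CENTER_REVENUE, DC_QOQ)    # about 41.0
year_ago_dc = implied_prior(DATA_CENTER_REVENUE, DC_YOY)         # about 30.8
dc_share = DATA_CENTER_REVENUE / TOTAL_REVENUE                   # about 0.898

print(f"Implied prior-quarter revenue: ${prior_quarter_total:.1f}B")
print(f"Implied year-ago revenue:      ${year_ago_total:.1f}B")
print(f"Implied prior-quarter DC:      ${prior_quarter_dc:.1f}B")
print(f"Implied year-ago DC:           ${year_ago_dc:.1f}B")
print(f"Data center share of revenue:  {dc_share:.1%}")
```

On these numbers, data center sales make up roughly nine tenths of total revenue, which is why the segment dominates any reading of the quarter.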

While some commentators pointed to NVIDIA’s rapidly rising inventories as a bearish signal, analysis suggests that the 32% QoQ rise in inventories is driven almost entirely by raw materials and work-in-process, while finished goods inventory has collapsed. This inventory shift reflects accelerating server build-outs to meet hyperscaler roadmaps, not softening demand.

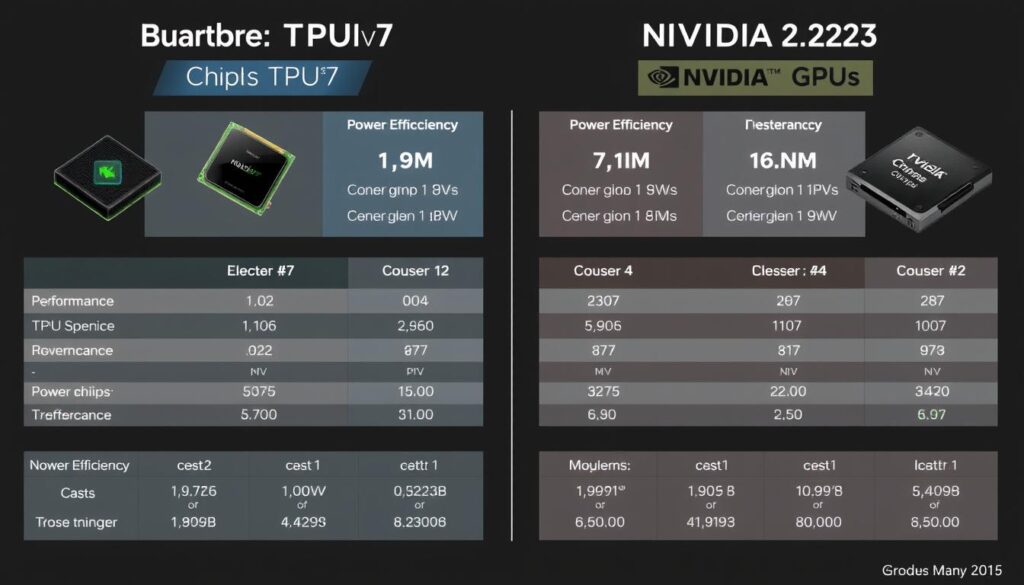

Google’s TPUv7 program is emerging as a serious competitor to NVIDIA’s GPU dominance

Google’s TPUv7 Challenges NVIDIA’s Dominance

A major dynamic beneath the Gemini and Opus 4.5 announcements comes from the economics of custom silicon. Google’s TPUv7 program is reaching commercial viability at a scale that could reshape cost curves for AI compute.

Anthropic’s TPU order reportedly exceeded 1GW, comprising at least 1 million chips split between 400,000 “Ironwood” units bought outright for roughly $10 billion and 600,000 rented via Google Cloud under a deal estimated at $42 billion. Industry analysts note that OpenAI, by merely signaling interest in TPUs during procurement negotiations, secured roughly 30% savings on its NVIDIA GPU fleet.
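A quick back-of-the-envelope calculation shows what the reported deal implies per chip. The sketch uses only the figures quoted above; the variable names are illustrative. The rental figure covers a contract term that is not stated here, so the purchase and rental per-chip numbers are not directly comparable, and the power figure treats "1GW" as a lower bound.

```python
# Figures as reported in the article.
PURCHASED_CHIPS = 400_000
PURCHASE_COST_USD = 10e9        # "roughly $10 billion"
RENTED_CHIPS = 600_000
RENTAL_DEAL_USD = 42e9          # "estimated at $42 billion", term not stated
TOTAL_POWER_WATTS = 1e9         # order "exceeded 1GW", so this is a floor

total_chips = PURCHASED_CHIPS + RENTED_CHIPS
purchase_per_chip = PURCHASE_COST_USD / PURCHASED_CHIPS   # USD per purchased chip
rental_per_chip = RENTAL_DEAL_USD / RENTED_CHIPS          # USD per rented chip, whole term
total_commitment = PURCHASE_COST_USD + RENTAL_DEAL_USD
watts_per_chip = TOTAL_POWER_WATTS / total_chips          # facility-level watts per chip

print(f"Purchase price per chip:   ${purchase_per_chip:,.0f}")
print(f"Rental spend per chip:     ${rental_per_chip:,.0f} (over the full, unstated term)")
print(f"Total commitment:          ${total_commitment / 1e9:.0f}B for {total_chips:,} chips")
print(f"Power budget per chip:     at least {watts_per_chip:,.0f} W")
```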

Meta, SSI, xAI, and other labs are evaluating large-scale TPU acquisitions as leverage against GPU pricing. The greater the TPU volumes Google sells, the more GPU capex its rivals avoid, suggesting Google could evolve into a de facto merchant silicon vendor and intensify the GPU–TPU pricing contest.

AI Policy and Geopolitical Developments

The Genesis Mission treats AI compute as a strategic industrial asset linked to energy and national security

The White House Launches Genesis Mission

The White House has formally launched the Genesis Mission, a federal initiative that treats AI compute as a strategic industrial asset inseparable from US energy and national security policy. Genesis frames AI data centers as “energy-hungry factories of intelligence” and lays out a plan to co-locate large-scale training clusters with new nuclear and renewable generation, rather than drawing ever more power from already stressed regional grids.

The Department of Energy’s program outlines a mix of public and private projects:

- Support for advanced reactor deployments sited directly alongside AI facilities

- Incentives for hyperscalers to procure firm low-carbon electricity

- Long-range planning premised on AI’s power demand rising by tens of gigawatts over the next decade

Genesis is also a data-mobilization project designed to unlock the federal government’s vast scientific corpus for AI training and automated discovery. The initiative directs the Department of Energy to build a national “American Science and Security Platform” that integrates decades of experimental data, federally curated scientific datasets, instrumentation outputs, and synthetic data pipelines—much of it previously siloed or inaccessible.

Export controls are creating a bifurcated global AI hardware ecosystem

US Tightens Export Controls on AI Chips to China

Washington has tightened export controls on NVIDIA’s China-specific B30A accelerators, blocking their sale after intelligence agencies concluded that even scaled-down versions could train frontier-class models when deployed in large clusters. NVIDIA has effectively written China out of its data center guidance and is redesigning yet another generation of export-compliant chips.

In response, Beijing has quietly issued guidance that any data center project receiving state funding must use only domestically produced AI chips. Chinese regulators ordered state-backed facilities less than 30% complete to remove installed foreign semiconductors or cancel planned purchases, effectively banning NVIDIA, AMD, and Intel accelerators from a large slice of the country’s future AI infrastructure.

The result is a de facto bifurcation of the AI hardware world:

Western AI Hardware Ecosystem

- NVIDIA remains the default provider

- AMD provides competitive pressure

- Google’s TPUs are gaining market share

- Open collaboration between vendors

Chinese AI Hardware Ecosystem

- Huawei leads domestic production

- Cambricon and local startups are growing

- Creative use of overseas data centers

- Risk of falling behind in absolute performance

Chinese firms like Alibaba and ByteDance are adapting by training models such as Qwen and Doubao on NVIDIA GPUs hosted in Singapore and Malaysia rather than onshore, creating a complex web of international AI development hubs.

Groundbreaking AI Research Papers

Kosmos AI Scientist can run for up to 12 hours performing iterative cycles of analysis and discovery

Kosmos: An AI Scientist for Autonomous Discovery

Edison Scientific, University of Oxford, and FutureHouse have introduced Kosmos, an AI scientist designed to automate data-driven discovery. Given an open-ended objective and dataset, it runs for up to 12 hours performing iterative cycles of parallel data analysis, literature search, and hypothesis generation.

A structured world model shares information between a data-analysis agent and a literature-search agent, enabling coherent pursuit of the objective across roughly 200 agent rollouts that collectively execute about 42,000 lines of code and read 1,500 papers per run. Kosmos cites all statements in its reports with code or primary literature, ensuring traceable reasoning.

Independent scientists found 79.4% of Kosmos’s statements accurate, and collaborators reported that a 20-cycle run equates to six months of their research time. The number of valuable findings scales linearly with cycles. By reproducing human discoveries across metabolomics, materials science, neuroscience, and genetics—and making novel contributions—Kosmos showcases the potential of structured multi-agent systems to accelerate scientific research.

DeepSeekMath-V2 achieved gold-level scores in the IMO 2025 and CMO 2024 competitions

DeepSeekMath-V2: Self-Verifiable Mathematical Reasoning

DeepSeek has introduced DeepSeekMath-V2, addressing the limitations of reinforcement learning methods that reward language models solely for correct final answers in math problems. The researchers propose training a verifier that can identify issues in natural-language proofs without reference solutions and using it as a reward model to train a proof generator.

By alternating between improving the verifier and using its feedback to refine the generator, they create a feedback loop where generation and verification reinforce each other. Built on DeepSeek-V3.2-Exp-Base, the resulting model achieves gold-level scores in the IMO 2025 and CMO 2024 competitions and solves 11 of 12 problems at Putnam 2024, scoring 118/120 and surpassing the highest human score.

These results demonstrate that self-verifiable mathematical reasoning is a promising direction for developing reliable automated theorem provers and highlight the value of coupling generation with strong verification.

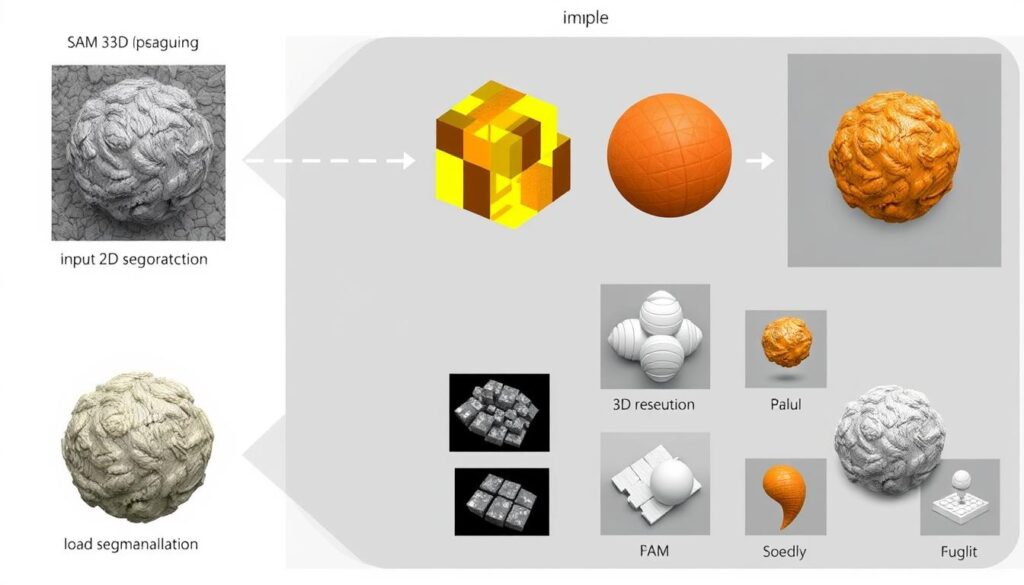

SAM 3D can generate detailed 3D models from a single image input

Meta Introduces SAM 3 and SAM 3D

Meta AI has released two significant updates to its Segment Anything Model:

SAM 3: Segment Anything with Concepts

SAM 3 extends Meta’s Segment Anything Model from segmentation of arbitrary objects to promptable concept segmentation. Given a concept prompt (a noun phrase or an exemplar image), the model must segment all instances of that concept across images or videos.

To support this, the authors constructed a dataset with four million unique concept labels and decoupled recognition from localization using a “presence head” that determines whether the concept exists. Their unified architecture doubles the accuracy of previous systems on concept segmentation tasks.
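The idea of decoupling recognition from localization can be illustrated with a small sketch. The text above does not say how the presence signal is combined with per-instance scores, so this example assumes a simple product of the two; the class, function, and threshold are all hypothetical and are not taken from Meta's code.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """A candidate mask with a score for how well it localizes an object (hypothetical)."""
    mask_id: int
    localization_score: float  # in [0, 1]


def select_instances(presence_prob: float,
                     candidates: list[Candidate],
                     threshold: float = 0.5) -> list[tuple[int, float]]:
    """Keep candidates whose combined score clears the threshold.

    Assumption: combined score = presence probability * localization score,
    so a low presence probability suppresses every candidate at once.
    """
    kept = []
    for candidate in candidates:
        combined = presence_prob * candidate.localization_score
        if combined >= threshold:
            kept.append((candidate.mask_id, combined))
    return kept


candidates = [Candidate(0, 0.8), Candidate(1, 0.4)]

# Concept is very likely present: the well-localized mask survives.
print(select_instances(0.9, candidates))

# Concept is probably absent: nothing is returned, however sharp the masks look.
print(select_instances(0.1, candidates))
```

The design point is that a single global "is this concept here at all?" decision gates all per-instance outputs, which keeps confident-looking masks from appearing for concepts that are not in the image.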

SAM 3D: 3Dfy Anything in Images

SAM 3D introduces a generative model that reconstructs 3D objects from a single image. The researchers combine a human- and model-in-the-loop annotation pipeline with multi-stage training: synthetic pre-training on rendered meshes, followed by real-world alignment.

The system uses the Segment Anything framework to isolate objects and then generates 3D shapes via a diffusion model conditioned on the 2D input. Evaluations show a 5:1 preference in human studies over prior methods. This work advances single-view 3D generation by leveraging segmentation models and bridging synthetic and real-world data, suggesting how generative AI can power AR/VR content creation.

AI Business and Investment Landscape

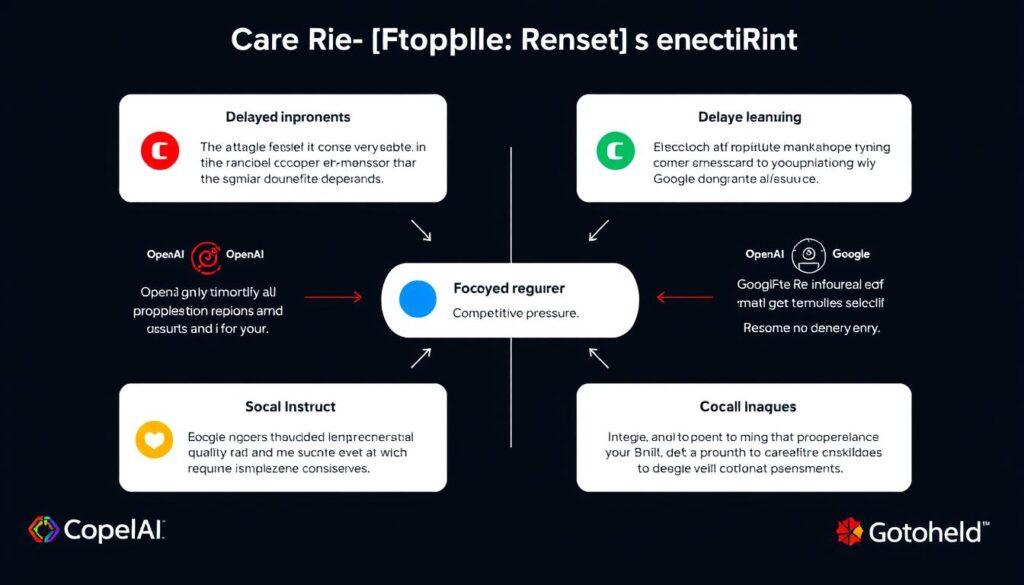

OpenAI’s “Code Red” signals a strategic pivot to focus on core ChatGPT quality

OpenAI Declares “Code Red” as Google Gemini Closes the Gap

OpenAI CEO Sam Altman has declared an internal “code red” at the company, telling employees that all efforts must now focus on improving ChatGPT quality. The emergency pivot comes after Google’s latest Gemini models showed they’re catching up to—and in some benchmarks surpassing—OpenAI’s offerings.

As part of this competitive response, OpenAI is reportedly developing a new AI model codenamed “Garlic” that the company claims tested ahead of Google’s flagship Gemini 3 model. The company is also prioritizing its Imagegen image generation model for ChatGPT users.

Altman’s memo indicated that other product launches will be delayed as the company prioritizes personalization features, speed improvements, and reliability for its flagship chatbot. Planned initiatives being pushed back include:

- Advertising integration

- AI agents for health and shopping

- A personal assistant feature called Pulse

The announcement signals growing concern that OpenAI’s first-mover advantage in consumer AI may be eroding as competition intensifies.

Anthropic’s potential IPO could be one of the largest in history at a valuation of $350 billion

Anthropic Eyes Historic IPO in Race Against OpenAI

Anthropic, the AI company behind Claude, has engaged law firm Wilson Sonsini and major banks for a potential 2026 public listing, with recent valuations reaching $350 billion after large investments from Microsoft and NVIDIA.

Anthropic is making a bold move while OpenAI is distracted by competitive pressure. Going public first could give it a significant advantage in the AI arms race: public markets mean more capital, more credibility, and the ability to attract top talent with stock options.

Notable AI Funding Rounds

| Company | Funding | Valuation | Focus Area | Lead Investors |
| --- | --- | --- | --- | --- |
| Profluent | $106M | Undisclosed | AI for biology | Jeff Bezos, Altimeter Capital |
| Metropolis | $500M Series D | $5B | AI-driven parking automation | LionTree, Eldridge Industries |
| Suno | $250M Series C | $2.45B | AI music generation | Menlo Ventures, NVentures |
| Gamma | $68M Series B | $2.1B | AI presentation generation | Andreessen Horowitz |
| Wonderful | $100M Series A | $700M | AI customer interaction platform | Index Ventures |

The UN warns that AI could reverse decades of economic progress for developing countries

UN Warns: AI Could Widen Global Inequality

A new report from the UN Development Programme titled “The Next Great Divergence” warns that AI could reverse decades of economic progress for developing countries. The report, presented in Geneva, argues that wealthy nations are racing ahead on AI infrastructure, talent, and data, while many developing countries lack basic digital capacity.

As AI becomes integrated into everything from finance to healthcare, countries without access to these tools risk being left further behind. The report calls for international cooperation and investment to prevent a new “AI divide” that could exacerbate existing global inequalities.

Emerging AI Trends for 2026

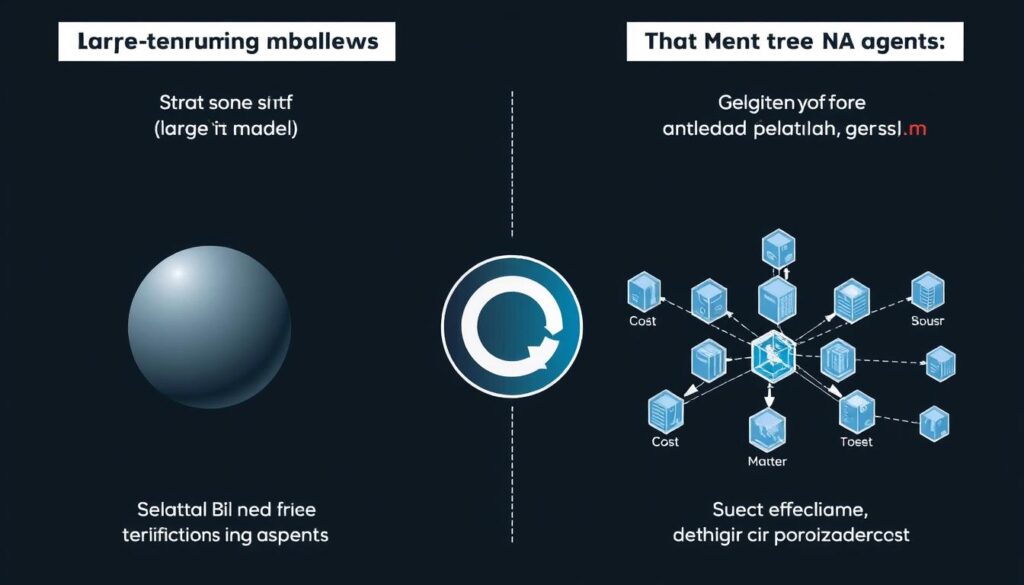

The industry is shifting toward smaller, more specialized AI agents for specific tasks

The Rise of Smaller, Specialized AI Agents

Industry insiders predict a significant transition away from large-scale, resource-intensive models such as those behind ChatGPT, and toward smaller, more narrowly focused AI agents. These specialized systems are expected to be cheaper to run and more efficient on specific, well-defined tasks.

If the prediction holds, AI development strategy will increasingly emphasize targeted functionality and affordability over massive, general-purpose systems.

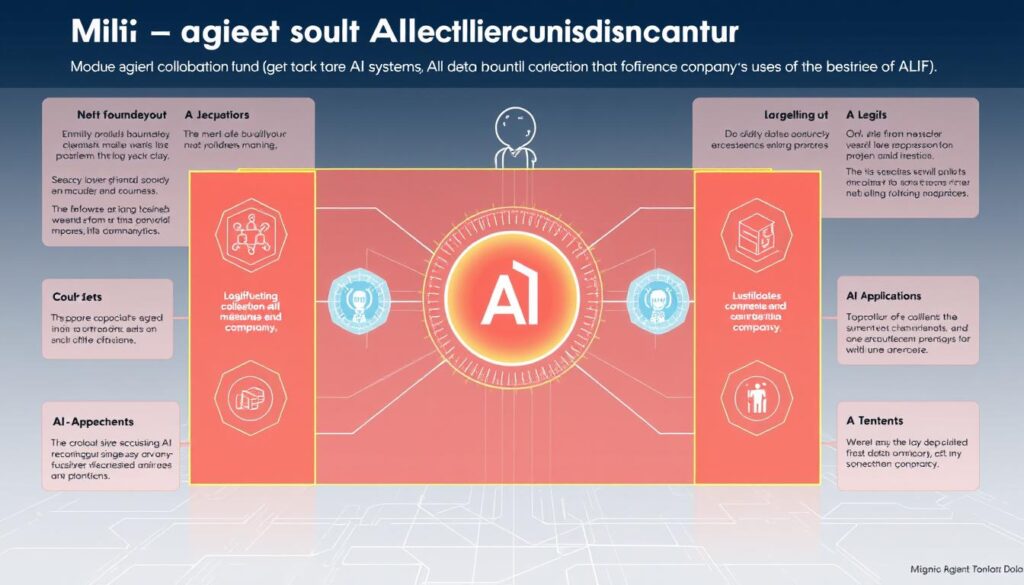

Fujitsu’s technology enables secure collaboration between AI agents from different companies

Multi-Agent Collaboration Technologies

Fujitsu has unveiled a technology that enables secure collaboration between AI agents developed and operated by different companies. The agents can work together without sharing or exposing confidential or proprietary data.

The goal is to preserve data privacy and security while still enabling cooperative problem-solving across organizations. Initial testing is scheduled to begin in January 2026 in partnership with Rohto Pharmaceutical, with a focus on streamlining supply chain operations.

Agentic workloads now account for over 30% of enterprise AI usage

Enterprise Adoption of Agentic AI Accelerates

According to Anthropic’s case studies, the share of “agentic” workloads—tasks where AI models call tools, write files, or drive external systems—now exceeds 30% of enterprise usage. This indicates that the marginal value of frontier models is shifting from pure text quality toward action and orchestration.

This trend is reflected in recent product launches from major AI companies:

- Google’s Antigravity for developer workflows where agents autonomously operate editors and terminals

- Anthropic’s integration of Claude with browsers and office applications

- OpenAI’s focus on tool use and API integrations

As enterprises become more comfortable with AI systems taking autonomous actions, we can expect to see further growth in agentic applications throughout 2026, particularly in areas like document processing, customer service, and software development.

AI power demand is projected to rise by tens of gigawatts over the next decade

Energy and Sustainability Challenges

As AI models continue to grow in size and complexity, energy consumption has become a critical concern. The US Department of Energy’s Genesis program highlights the expectation that AI’s power demand will rise by tens of gigawatts over the next decade.

This challenge is driving several emerging trends:

- Co-location of AI data centers with nuclear and renewable energy sources

- Development of more energy-efficient hardware like Google’s TPUs

- Research into “Intelligence per Watt” optimization

- Growing focus on smaller, more efficient models for edge deployment

As we move into 2026, expect to see more AI companies publishing energy efficiency metrics alongside traditional performance benchmarks, and more partnerships between AI labs and clean energy providers.

The AI Landscape Heading into 2026

The AI competitive landscape continues to evolve rapidly as we head into 2026

As we close out December 2025, the AI landscape is characterized by intensifying competition, massive infrastructure investments, and a shift toward more capable, agentic systems. The “big three” of OpenAI, Google, and Anthropic continue to dominate the frontier model space, but the competitive dynamics are shifting rapidly.

Google’s Gemini 3 and Anthropic’s Claude Opus 4.5 have demonstrated that the gap between leading models is narrowing, forcing OpenAI into a defensive posture with its “code red” response. Meanwhile, the bifurcation of the global AI ecosystem between Western and Chinese technology stacks continues to accelerate, with significant implications for global AI development.

Looking ahead to 2026, we can expect:

- Continued evolution toward smaller, specialized AI agents working in concert

- Greater focus on energy efficiency and sustainable AI infrastructure

- Increased enterprise adoption of agentic AI for automation

- More sophisticated multi-modal capabilities across text, image, and video

- Growing policy attention to AI’s economic and social impacts

The developments of December 2025 have set the stage for what promises to be another transformative year in artificial intelligence. As models continue to surpass human capabilities in specific domains like software engineering, the focus is shifting from “can AI do this?” to “how do we responsibly integrate these capabilities into our businesses and society?”
