The artificial intelligence landscape continues to evolve quickly. This week brings significant developments on several fronts, from enhanced language models and enterprise AI solutions to regulatory frameworks and creative tools. We’ve curated the ten most impactful AI stories, which show how rapidly the technology is transforming business, governance, and creative expression. Let’s dive into the innovations that defined the second week of November 2025.

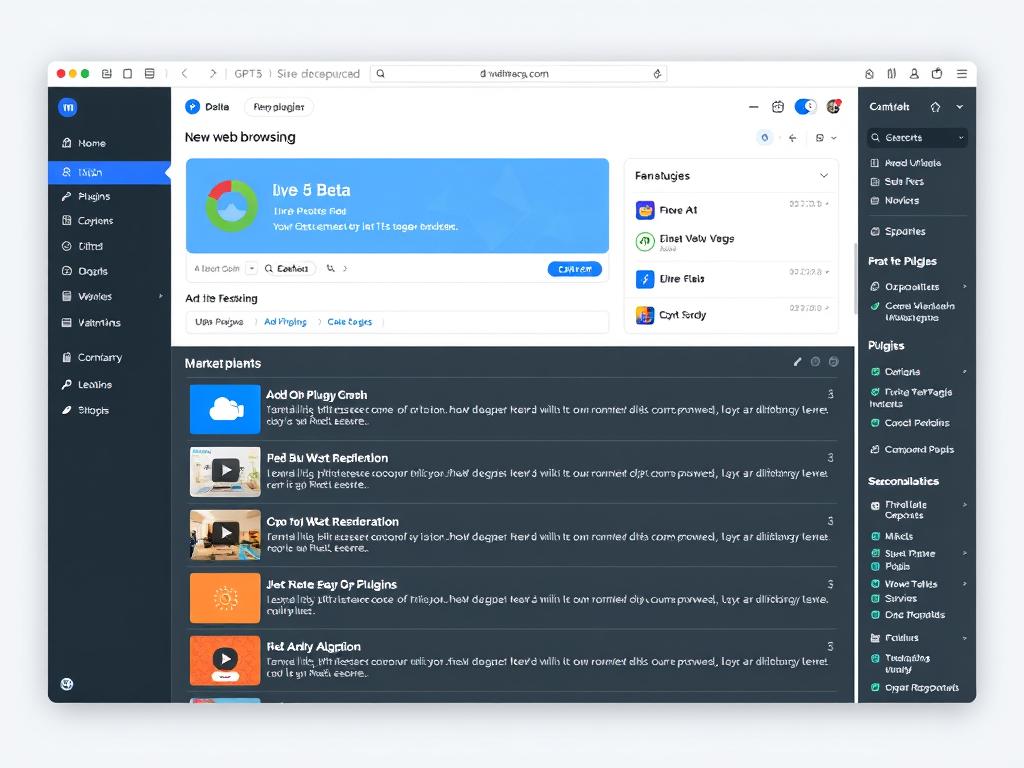

1️⃣ GPT-5 Beta Gets Live Web Browsing + Plugin Marketplace

OpenAI has significantly expanded GPT-5’s capabilities with the introduction of live web browsing and a comprehensive plugin marketplace in its latest beta release. Unlike previous iterations, which were limited to the information captured in their training data, GPT-5 can now access and analyze real-time information from across the internet, enabling it to provide current news, market data, and research findings.

The live browsing feature allows GPT-5 to navigate websites, extract relevant information, and even interact with web applications while maintaining context throughout the session. This represents a major leap forward in AI assistants’ ability to serve as real-time research partners rather than static knowledge repositories.

Complementing this functionality, the new plugin marketplace offers a centralized ecosystem where developers can publish tools that extend GPT-5’s capabilities. The marketplace already features over 200 plugins across categories including data analysis, creative tools, productivity enhancements, and specialized research assistants.

Industry analysts note that this expansion addresses one of the most significant limitations of large language models—their inability to access current information—while creating new opportunities for developers to build and monetize AI-powered tools.

“With live web browsing and the plugin ecosystem, GPT-5 transitions from being primarily a text generator to becoming a genuine cognitive assistant capable of real-time problem-solving,” says Dr. Elena Markov, AI Research Director at Stanford’s Human-Centered AI Institute.

Early access users report that the combination of real-time web access and specialized plugins has dramatically improved GPT-5’s utility for complex research tasks, content creation, and data analysis workflows.

2️⃣ Google Rolls Out Gemini Enterprise Worldwide

Google has completed the global rollout of Gemini Enterprise, making its most advanced AI system available to organizations worldwide. This enterprise-grade offering builds upon the consumer version of Gemini with enhanced security features, expanded context windows, and specialized tools for business applications.

The worldwide deployment includes regional data processing centers in North America, Europe, Asia-Pacific, and South America to address data sovereignty requirements and reduce latency. Google has also introduced industry-specific versions of Gemini Enterprise tailored for healthcare, financial services, manufacturing, and retail sectors.

Key features of the global release include:

- Extended context window of up to 2 million tokens for processing entire document repositories

- Advanced data residency controls that comply with regional regulations

- Enterprise-grade security with SOC 2, ISO 27001, and HIPAA compliance

- Custom model fine-tuning capabilities for organization-specific knowledge

- Integration with existing Google Workspace and Google Cloud services

The global availability positions Google to compete more directly with OpenAI’s GPT offerings in the enterprise market. Analysts note that Google’s established enterprise relationships and integrated cloud ecosystem provide advantages in certain sectors.

“Gemini Enterprise represents our commitment to making advanced AI accessible to organizations of all sizes across the globe,” said Thomas Kurian, CEO of Google Cloud. “We’ve designed it to address the specific needs of enterprises while maintaining the highest standards of security and compliance.”

Early adopters include multinational corporations like Siemens, Toyota, and HSBC, which are deploying Gemini Enterprise for knowledge management, customer service automation, and internal process optimization.

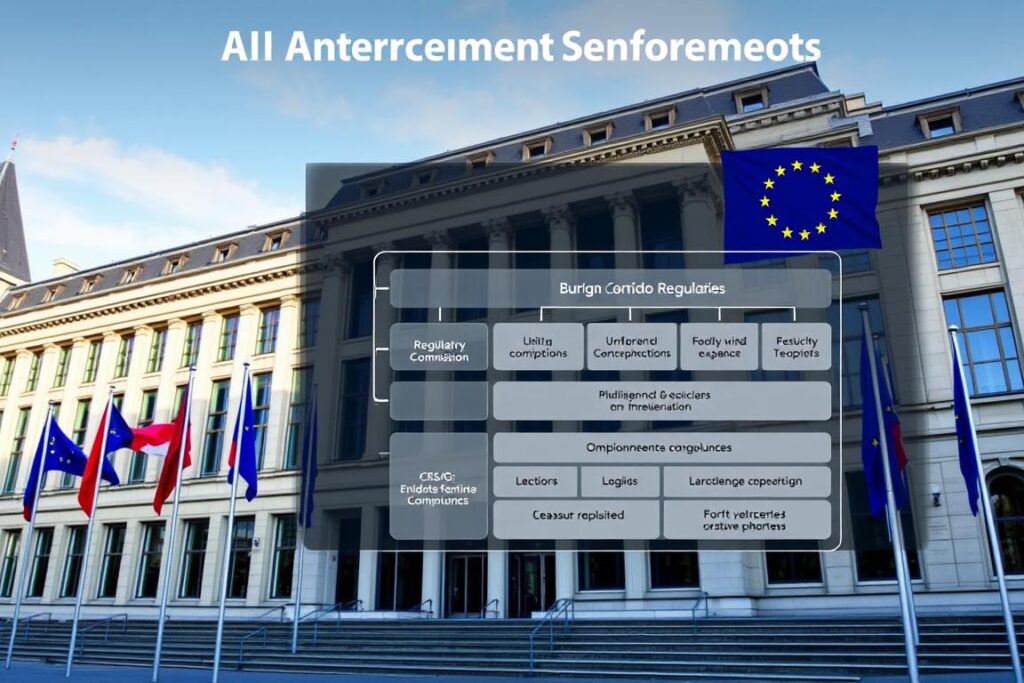

3️⃣ EU Approves Strict AI Act Enforcement Rules

The European Commission has approved the final enforcement framework for the EU AI Act, establishing concrete mechanisms for implementing what is widely considered the world’s most comprehensive AI regulation. The newly approved rules detail specific compliance requirements, enforcement procedures, and penalties for violations.

The enforcement framework introduces a tiered approach to AI oversight based on risk categories:

| Risk Category | Examples | Requirements | Enforcement Body |
| --- | --- | --- | --- |
| Unacceptable Risk | Social scoring, manipulative AI, real-time biometric identification in public spaces | Prohibited with limited exceptions | National AI Authorities with EU AI Office oversight |
| High Risk | Critical infrastructure, education, employment, law enforcement | Mandatory risk assessment, human oversight, documentation, transparency | National AI Authorities |
| Limited Risk | Chatbots, emotion recognition, biometric categorization | Transparency obligations, disclosure of AI use | Industry self-regulation with oversight |
| Minimal Risk | AI-enabled video games, spam filters, inventory management | Voluntary codes of conduct | Industry self-regulation |

The enforcement rules establish a central European AI Office with coordination powers across member states and introduce substantial penalties for non-compliance: up to 7% of global annual revenue for the most serious violations, 4% for high-risk violations, and 2% for providing incorrect information to authorities.
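To make the penalty ceilings concrete, the short Python sketch below applies the percentages quoted above to a company’s global annual revenue. The tier labels (`most_serious`, `high_risk`, `incorrect_information`) and the function name `max_penalty` are our own shorthand, not terms from the regulation, and the sketch ignores any fixed-amount thresholds or other adjustments the final rules may contain.

```python
from decimal import Decimal

# Percentage caps as quoted in this article; the tier names are
# hypothetical labels chosen for this illustration.
PENALTY_CAPS = {
    "most_serious": Decimal("0.07"),
    "high_risk": Decimal("0.04"),
    "incorrect_information": Decimal("0.02"),
}


def max_penalty(global_annual_revenue, tier):
    """Return the revenue-based penalty ceiling for a given tier."""
    return Decimal(global_annual_revenue) * PENALTY_CAPS[tier]


if __name__ == "__main__":
    revenue = 1_000_000_000  # assumed example: 1 billion in annual revenue
    for tier in PENALTY_CAPS:
        print(tier, max_penalty(revenue, tier))
```

For a company with 1 billion in global annual revenue, the three ceilings work out to 70 million, 40 million, and 20 million respectively.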

Companies now have a 12-month transition period to implement compliance measures before enforcement begins in November 2026. The framework also includes provisions for regulatory sandboxes to allow innovation while ensuring compliance.

Industry reactions have been mixed. European technology associations have praised the clarity provided by the detailed rules while expressing concerns about compliance costs. International tech companies are evaluating how these regulations will affect their global AI strategies.

4️⃣ Walmart Expands AI Inventory Automation Nationally

Walmart has announced the nationwide deployment of its AI-powered inventory management system following successful pilots in 200 stores. The expansion will bring autonomous inventory robots and predictive stocking algorithms to all 4,700+ Walmart locations across the United States by mid-2026.

The system combines physical robots that scan store shelves with advanced computer vision and machine learning algorithms that analyze inventory levels, predict demand patterns, and optimize restocking operations. The technology can detect misplaced items, identify low stock situations, and automatically generate reorder requests.
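Walmart has not published implementation details, so the following Python snippet is only an illustrative sketch of the kind of logic described above: flagging misplaced items and generating reorder requests when stock runs low. Every name in it (`ShelfReading`, `review_scan`, the `reorder_point` and `target_level` fields) is a hypothetical stand-in, and a simple threshold rule is assumed in place of the predictive models the company describes.

```python
from dataclasses import dataclass


@dataclass
class ShelfReading:
    """One hypothetical robot observation of a product on a shelf."""
    sku: str
    assigned_aisle: str
    observed_aisle: str
    units_on_shelf: int
    reorder_point: int   # assumed threshold that triggers a reorder
    target_level: int    # assumed stock level to restore


def review_scan(readings):
    """Return (misplaced SKUs, reorder requests) for a batch of readings."""
    misplaced = []
    reorders = []
    for r in readings:
        # An item seen outside its assigned aisle counts as misplaced.
        if r.observed_aisle != r.assigned_aisle:
            misplaced.append(r.sku)
        # Stock at or below the threshold generates a reorder request.
        if r.units_on_shelf <= r.reorder_point:
            reorders.append(
                {"sku": r.sku, "quantity": r.target_level - r.units_on_shelf}
            )
    return misplaced, reorders
```

A production system would presumably replace the fixed threshold with a demand forecast, but the shape of the output (a list of misplaced items and a list of reorder requests) would likely be similar.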

Key components of Walmart’s AI inventory system include:

In-Store Components

- Autonomous inventory robots that navigate store aisles during operating hours

- Shelf-scanning computer vision systems that identify products with 99.7% accuracy

- Electronic shelf labels that update prices dynamically based on inventory levels

- Staff mobile applications for inventory management and task prioritization

Backend Systems

- Predictive demand forecasting using historical data and external factors

- Automated replenishment systems integrated with distribution centers

- Real-time inventory visibility across the entire supply chain

- Machine learning models that continuously improve accuracy over time

Walmart reports that stores using the system have seen a 30% reduction in out-of-stock incidents, 25% less excess inventory, and a 20% increase in inventory accuracy. The company estimates the technology will save over $2 billion annually when fully deployed.

“This national rollout represents the largest deployment of retail automation technology in history,” said John Furner, President and CEO of Walmart U.S. “By combining physical robots with advanced AI, we’re creating a more efficient operation that benefits both our customers and associates.”

The company emphasized that the technology is designed to augment rather than replace human workers, with associates being retrained to work alongside the automated systems in higher-value roles.

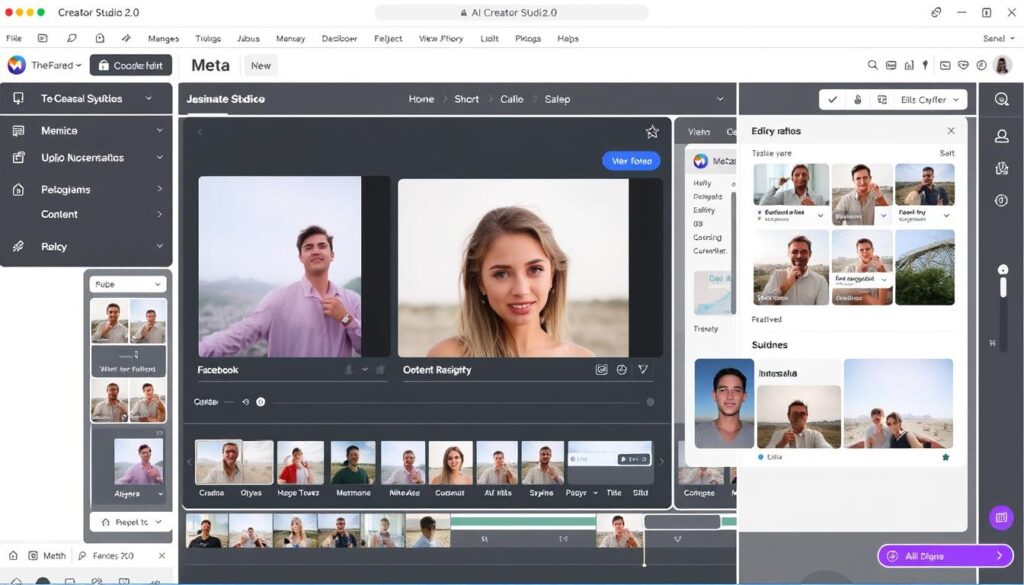

5️⃣ Meta Launches AI Creator Studio 2.0

Meta has unveiled AI Creator Studio 2.0, a comprehensive upgrade to its content creation platform designed for influencers, marketers, and content creators across its family of apps. The new version introduces advanced AI-powered tools for generating, editing, and optimizing content for Facebook, Instagram, and Threads.

The platform’s centerpiece is a suite of multimodal generative AI tools that can create and modify images, videos, and text based on natural language prompts. Creators can generate custom visuals, transform existing content, and receive AI-assisted recommendations for improving engagement.

Key Features of AI Creator Studio 2.0:

Content Generation

- Text-to-image generation with brand-specific styling

- Short-form video creation from text prompts

- AI-written captions and hashtag recommendations

- Multilingual content adaptation for global audiences

Content Enhancement

- Style transfer between images and videos

- Background removal and replacement

- Audio enhancement and voice generation

- Automatic content resizing for different platforms

Analytics & Optimization

- AI-powered performance predictions

- Audience sentiment analysis

- Optimal posting time recommendations

- A/B testing for content variations

The platform includes built-in safeguards to prevent the creation of misleading content, with automatic watermarking for AI-generated media and content authentication features. Meta has also implemented usage limits and review processes for certain types of content generation.

“AI Creator Studio 2.0 democratizes content creation by giving creators of all skill levels access to professional-grade tools,” said Tom Alison, Head of Facebook. “We’re empowering creators to express themselves in new ways while maintaining the authenticity that audiences value.”

The platform is available immediately to verified creators and business accounts, with a phased rollout to all users planned over the next three months. Meta is also launching a certification program to help creators master the new tools.

6️⃣ NVIDIA Introduces Mini Tensor-Core Edge AI Chips

NVIDIA has unveiled a new line of miniaturized tensor-core processors designed specifically for edge computing applications. The NVIDIA Edge Tensor series brings datacenter-class AI capabilities to compact devices with significant improvements in power efficiency and form factor.

The new chips, designated ET100, ET200, and ET300, are designed for deployment in IoT devices, autonomous vehicles, retail analytics systems, and industrial equipment. They enable complex AI workloads to run locally without requiring cloud connectivity, reducing latency and addressing privacy concerns.

Technical Specifications:

| Model | Tensor Cores | Performance (TOPS) | Power Consumption | Form Factor | Target Applications |
| --- | --- | --- | --- | --- | --- |
| ET100 | 64 | 20 | 1-3W | 15mm × 15mm | Smart cameras, sensors, wearables |
| ET200 | 128 | 45 | 5-10W | 25mm × 25mm | Retail analytics, industrial automation |
| ET300 | 256 | 100 | 10-15W | 30mm × 30mm | Autonomous vehicles, edge servers |
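One way to compare the three chips is performance per watt. The Python sketch below derives a rough TOPS-per-watt range from the table figures. The efficiency metric is our own calculation rather than a number NVIDIA has quoted, and it assumes, as a simplification, that the listed TOPS figure is reachable anywhere within the stated power range.

```python
# Figures copied from the specification table above; the derived
# efficiency range is an illustration, not a vendor-published number.
SPECS = {
    "ET100": {"tops": 20, "watts": (1, 3)},
    "ET200": {"tops": 45, "watts": (5, 10)},
    "ET300": {"tops": 100, "watts": (10, 15)},
}


def tops_per_watt(model):
    """Return (lowest, highest) TOPS per watt across the power range."""
    tops = SPECS[model]["tops"]
    low_w, high_w = SPECS[model]["watts"]
    # Efficiency is lowest at the highest power draw and vice versa.
    return tops / high_w, tops / low_w


if __name__ == "__main__":
    for model in SPECS:
        low, high = tops_per_watt(model)
        print(f"{model}: {low:.1f} to {high:.1f} TOPS/W")
```

Under this simplification the ET100 lands between roughly 6.7 and 20 TOPS per watt, the ET200 between 4.5 and 9, and the ET300 between about 6.7 and 10.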

The Edge Tensor chips include specialized hardware for computer vision tasks, natural language processing, and sensor fusion. They support popular AI frameworks including TensorFlow, PyTorch, and NVIDIA’s own TensorRT, with a unified software development kit for deployment across different chip variants.

NVIDIA has partnered with leading device manufacturers including Bosch, Honeywell, and Lenovo to integrate the new chips into their product lines. The company also announced an Edge AI Marketplace where developers can access pre-trained models optimized for the Edge Tensor architecture.

“These chips represent a fundamental shift in what’s possible at the edge,” said Jensen Huang, NVIDIA’s CEO. “We’re bringing the power of tensor computing to devices that couldn’t previously support advanced AI, opening new possibilities for real-time intelligence in everyday products.”

Industry analysts note that the new chips position NVIDIA to compete more directly with Qualcomm, Intel, and specialized edge AI chip manufacturers in the rapidly growing market for edge computing hardware.

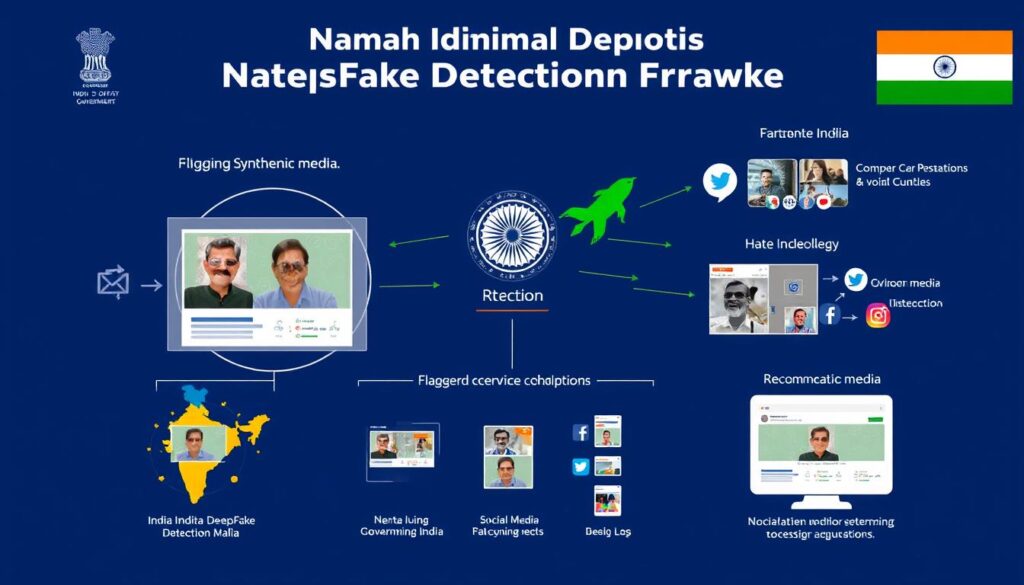

7️⃣ India Launches National Deepfake Detection Framework

The Government of India has launched a comprehensive National Deepfake Detection Framework (NDDF) to combat the growing threat of synthetic media. The initiative combines regulatory measures, technical solutions, and public awareness campaigns to identify and limit the spread of manipulated content.

The framework establishes a centralized Deepfake Detection Portal where citizens, organizations, and law enforcement can submit suspicious content for analysis. The system uses a combination of AI-based detection algorithms, digital watermarking, and content provenance tracking to identify synthetic media with reported accuracy rates of over 96%.

Key Components of India’s Deepfake Detection Framework:

- Technical Infrastructure: AI-powered detection systems deployed across government platforms and made available to private sector partners

- Regulatory Measures: New requirements for platforms to label AI-generated content and implement detection mechanisms

- Certification System: Digital certificates for authentic media from verified sources like news organizations and government agencies

- Rapid Response Team: Dedicated unit to address high-priority deepfakes, such as those circulating during elections or emergencies and those targeting public figures

- Public Education: Nationwide campaign to help citizens identify potential synthetic media

The initiative follows several high-profile incidents where deepfakes of Indian politicians and celebrities caused public confusion and market disruptions. The framework is being implemented in phases, with initial deployment focusing on government websites, major social media platforms, and news outlets.

“As the world’s largest democracy, India must protect its information ecosystem from synthetic manipulation,” said Ashwini Vaishnaw, Minister of Electronics and Information Technology. “This framework balances technological solutions with regulatory oversight to preserve authentic communication.”

Major technology companies including Meta, Google, and Microsoft have committed to integrating with the framework, while domestic platforms like ShareChat and JioMeet have already implemented the detection APIs. The government has also announced plans to share the framework’s technical specifications with other countries facing similar challenges.

The NDDF includes a public verification tool available at deepfakecheck.gov.in where anyone can upload content to determine if it shows signs of AI manipulation.

8️⃣ Anthropic Releases Claude 3.5 Vision Update

Anthropic has released Claude 3.5 Vision, a major update to its flagship AI assistant that significantly enhances its ability to understand and reason about visual content. The update represents a substantial improvement in multimodal capabilities, enabling Claude to analyze complex images with greater accuracy and depth.

Claude 3.5 Vision can now process high-resolution images at up to 16K resolution, interpret complex diagrams, analyze charts and graphs with numerical precision, and understand spatial relationships between objects. The system demonstrates improved performance in specialized domains including medical imaging, scientific visualization, and document analysis.

Key Improvements in Claude 3.5 Vision:

Technical Capabilities

- 16K image resolution support (up from 4K)

- Simultaneous analysis of up to 20 images

- Improved OCR with 99.2% accuracy for printed text

- Enhanced fine-grained visual reasoning

- Better understanding of image sequences and relationships

Application Areas

- Medical image interpretation (X-rays, MRIs, pathology slides)

- Scientific data visualization analysis

- Complex document processing with mixed formats

- Retail inventory and product recognition

- Architectural and engineering diagram comprehension

Anthropic reports that Claude 3.5 Vision achieves state-of-the-art performance on standard visual reasoning benchmarks, including a 92.7% score on MMMU (Massive Multi-discipline Multimodal Understanding) and 95.3% on MathVista, representing significant improvements over previous models.

The update also introduces several safety features, including enhanced detection of inappropriate image content, better recognition of attempts to circumvent content policies, and more nuanced handling of potentially sensitive visual material.

“Claude 3.5 Vision represents a fundamental advance in how AI systems understand the visual world,” said Dario Amodei, CEO of Anthropic. “We’ve focused not just on recognition, but on deep comprehension of visual information in context.”

The enhanced vision capabilities are available immediately to Claude Pro and Claude Team subscribers, with a phased rollout to Claude Free users over the coming weeks. Anthropic has also released new API endpoints for developers to integrate Claude’s vision capabilities into their applications.

9️⃣ TikTok Tests “AI Director Mode” for Creators

TikTok has begun testing “AI Director Mode,” an advanced content creation tool that uses artificial intelligence to help creators plan, shoot, and edit professional-quality videos. The feature, currently available to select creators in a closed beta, aims to simplify video production while enhancing creative possibilities.

AI Director Mode guides creators through the entire video creation process, from concept development to final editing. The system can generate storyboard suggestions based on trending formats, recommend optimal shot compositions, and automatically edit footage into cohesive narratives.

Key Features of TikTok’s AI Director Mode:

- Concept Generation: AI-suggested video concepts based on trending formats and the creator’s previous successful content

- Shot Planning: Visual storyboards with composition guides and timing recommendations

- Real-time Coaching: Live feedback on framing, lighting, and performance during recording

- Automated Editing: Intelligent clip selection, pacing, and transitions based on content type

- Music & Sound Matching: AI-recommended audio options that complement visual content

- Effect Suggestions: Contextually appropriate filters and effects based on content and style

The system learns from creator preferences and audience engagement patterns to provide increasingly personalized recommendations over time. Creators maintain full control, with the ability to accept, modify, or reject AI suggestions at each stage of the process.

“AI Director Mode is like having a professional video production team in your pocket. It handles the technical aspects so creators can focus on their unique perspective and personality.”

Early testers report that the feature has helped them produce more polished content in less time, with several noting that it has introduced them to filming techniques and editing styles they wouldn’t have attempted otherwise.

TikTok plans to expand the beta program to 10,000 additional creators in December 2025, with a broader rollout expected in early 2026. The company has emphasized that AI Director Mode is designed to augment rather than replace human creativity, positioning it as a collaborative tool rather than an automated content generator.

🔟 AI Model Co-Authors a Peer-Reviewed Medical Research Paper

In a significant milestone for artificial intelligence in scientific research, an AI model has been formally credited as a co-author on a peer-reviewed paper published in the prestigious New England Journal of Medicine (NEJM). The research, focusing on novel biomarkers for early Alzheimer’s detection, represents the first instance of a major medical journal accepting an AI system as a credited contributor to original research.

The AI system, developed by researchers at Johns Hopkins University and named BioMind-7, contributed to multiple aspects of the study including hypothesis generation, experimental design optimization, data analysis, and manuscript drafting. The journal’s decision to accept the AI as a co-author came after extensive review of its specific contributions.

BioMind-7’s Contributions to the Research:

- Identified previously overlooked patterns in proteomic data suggesting new biomarker candidates

- Designed optimal experimental protocols to test the biomarker hypotheses

- Performed statistical analysis of experimental results with rigorous validation

- Generated visualizations and explanations of complex data relationships

- Drafted sections of the methodology and results for the manuscript

The human research team validated all AI contributions and maintained final editorial control over the paper. The publication includes a detailed appendix documenting the specific roles of both human and AI contributors, as well as the methodology used to verify the AI’s work.

“This represents a new paradigm in scientific collaboration,” said Dr. Sarah Chen, the study’s lead author. “BioMind-7 identified patterns in our data that we might have missed and suggested experimental approaches we hadn’t considered. It functioned as a genuine intellectual partner in the research process.”

The NEJM’s decision has sparked discussion about authorship standards in scientific publishing. The journal’s editor-in-chief, Dr. Eric Rubin, explained that the decision followed months of deliberation about how to appropriately credit AI contributions to research.

“We established specific criteria for AI co-authorship that focus on original intellectual contribution rather than tool-like assistance,” Dr. Rubin stated. “In this case, the AI system made novel contributions that would traditionally merit authorship if they came from a human collaborator.”

The research itself has significant implications for Alzheimer’s diagnosis, identifying a panel of blood-based biomarkers that could potentially detect the disease years before symptoms appear, with a reported sensitivity of 94% and specificity of 91% in preliminary validation studies.
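Sensitivity and specificity alone do not say how likely a positive result is to be correct; that also depends on how common the disease is in the group being tested. The Python sketch below applies Bayes’ rule to the reported figures to estimate the positive predictive value. The 10% prevalence is a purely illustrative assumption of ours and does not come from the study.

```python
def positive_predictive_value(sensitivity, specificity, prevalence):
    """Probability that a positive test result is a true positive."""
    true_positives = sensitivity * prevalence
    false_positives = (1 - specificity) * (1 - prevalence)
    return true_positives / (true_positives + false_positives)


if __name__ == "__main__":
    # 0.94 and 0.91 are the reported figures; 0.10 is an assumed prevalence.
    ppv = positive_predictive_value(0.94, 0.91, 0.10)
    print(f"Positive predictive value: {ppv:.1%}")
```

At that assumed prevalence, a little over half of positive results would be true positives, which is why screening performance is usually discussed together with the population being tested.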

The Accelerating Pace of AI Innovation

This week’s developments highlight the increasingly multifaceted nature of AI advancement. We’re witnessing simultaneous progress across multiple dimensions: technical capabilities are expanding with models like GPT-5 and Claude 3.5 Vision; enterprise adoption is accelerating through offerings like Gemini Enterprise; regulatory frameworks are maturing with the EU AI Act; and practical applications are proliferating from retail inventory management to creative content production.

Perhaps most significantly, we’re seeing AI transition from experimental technology to essential infrastructure across industries. The integration of AI into core business operations at Walmart, creative workflows at TikTok, and scientific research processes signals a fundamental shift in how organizations leverage artificial intelligence.

As these technologies continue to evolve, the balance between innovation, regulation, and responsible deployment will remain crucial. The coming months will likely bring further refinements to these systems, new applications across sectors, and ongoing dialogue about governance frameworks that can harness AI’s benefits while mitigating potential risks.
