The AI landscape is shifting. While massive language models with hundreds of billions of parameters dominate headlines, small and efficient AI models are showing that less can be more. These compact models deliver strong performance on targeted tasks with a fraction of the computational resources, making advanced AI practical in applications from healthcare diagnostics to edge computing and IoT devices.

In this guide, we’ll explore why small AI models are gaining traction, examine their key advantages, showcase real-world implementations, and offer practical guidance for businesses looking to adopt this technology. You’ll see how these lightweight models challenge the “bigger is better” paradigm and open new possibilities for sustainable, accessible AI.

The Growing Importance of Small & Efficient AI Models

The AI industry has long followed a “bigger is better” approach, with frontier models like GPT-4 and Claude 3 generally assumed to be very large, although their developers have not published parameter counts. However, these massive models come with significant drawbacks: they require enormous computational resources, generate substantial carbon footprints, and remain inaccessible to many organizations due to cost and infrastructure requirements.

Small language models (SLMs) and efficient AI architectures are emerging as compelling alternatives. With parameter counts ranging from a few million to a few billion, these models deliver targeted performance while dramatically reducing resource demands. This shift is particularly crucial as AI adoption expands beyond tech giants to organizations with limited computing infrastructure.

Key Industries Embracing Small AI Models

Healthcare

In healthcare, small AI models are revolutionizing point-of-care diagnostics and patient monitoring. These models can run directly on medical devices, enabling real-time analysis without sending sensitive patient data to cloud servers. From portable ultrasound interpretation to continuous vital sign monitoring, compact models are making healthcare smarter and more accessible.

Internet of Things (IoT)

The IoT ecosystem demands intelligent processing at the edge. Small models enable smart sensors to analyze data locally, reducing latency and bandwidth requirements. Applications range from smart agriculture sensors that monitor crop health to industrial equipment with predictive maintenance capabilities—all operating on minimal power.

Edge Computing

Edge computing environments benefit tremendously from efficient AI models. By processing data locally on devices rather than sending everything to the cloud, organizations can reduce latency, enhance privacy, and operate in environments with limited connectivity. This approach is particularly valuable for remote operations, retail analytics, and smart infrastructure.

Key Advantages of Small & Efficient AI Models

The benefits of small AI models extend far beyond simply requiring less storage space. These compact models offer multifaceted advantages that make them increasingly attractive for practical applications across industries.

Lower Computational Demands

Small AI models require significantly less computational power to train and run. This translates to faster inference times and the ability to operate on standard hardware rather than specialized GPU clusters. For example, models like Microsoft’s Phi-3-mini (3.8 billion parameters) can run efficiently on consumer-grade hardware while still delivering impressive performance on language tasks.
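
To make the hardware claim concrete, here is a rough back-of-the-envelope sketch of how much memory a model's weights occupy at different numeric precisions. It covers the weights only and ignores activations, caches, and runtime overhead, so treat the results as a lower bound. The function name and the precision table are illustrative assumptions, not part of any vendor tooling.

```python
# Approximate bytes needed to store one weight at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Return the memory needed for the weights alone, in gigabytes (10^9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    phi3_mini_params = 3.8e9  # parameter count quoted in the text above
    for precision in BYTES_PER_PARAM:
        size = weight_memory_gb(phi3_mini_params, precision)
        print(f"{precision}: {size:.1f} GB")
```

At 16-bit precision a 3.8-billion-parameter model needs roughly 7.6 GB for its weights, and about 1.9 GB at 4-bit precision, which is why models of this size can fit on laptops and some phones.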

Cost Efficiency

The economic benefits of small models are substantial. Organizations can save on infrastructure costs, cloud computing expenses, and energy bills. These savings extend across the entire AI lifecycle—from initial development and training to deployment and ongoing operation. For startups and small businesses, this cost efficiency can be the difference between being able to implement AI solutions or not.

Environmental Benefits

As organizations increasingly prioritize sustainability, the environmental impact of AI systems is coming under scrutiny. Small models consume significantly less energy, reducing carbon emissions associated with both training and inference. One widely cited 2019 study estimated that training a large NLP model with neural architecture search could emit roughly as much carbon as five cars over their lifetimes. Smaller, more efficient models can cut this impact substantially.

Enhanced Privacy and Security

By enabling on-device processing, small models allow sensitive data to remain local rather than being transmitted to cloud servers. This approach minimizes exposure to potential data breaches and helps organizations comply with privacy regulations like GDPR and HIPAA. For industries handling confidential information, such as healthcare and finance, this privacy-preserving capability is invaluable.

Deployment Flexibility

Small models can be deployed across a wider range of environments, from cloud servers to edge devices and mobile applications. This flexibility enables AI capabilities in scenarios where connectivity is limited or unreliable, such as remote industrial facilities, developing regions, or even space exploration. The ability to run offline ensures consistent performance regardless of network conditions.

Advantages of Small AI Models

- Reduced computational requirements

- Lower deployment and operational costs

- Minimized carbon footprint

- Enhanced data privacy and security

- Faster inference and response times

- Broader deployment options

- Accessibility for resource-constrained organizations

Challenges to Consider

- Potentially reduced scope of capabilities

- May require domain-specific fine-tuning

- Limited generalization across diverse tasks

- Requires careful optimization techniques

- Performance trade-offs in some complex scenarios

Real-World Examples of Small & Efficient AI Models

The landscape of small AI models is rapidly evolving, with innovative approaches delivering impressive results across various domains. Let’s examine some of the most notable examples and their practical applications.

1. TinyML: Intelligence at the Extreme Edge

TinyML represents the cutting edge of small AI model deployment, enabling deep learning on ultra-low-power microcontrollers. These specialized implementations can run on devices consuming mere milliwatts of power, opening new frontiers for embedded intelligence.

Key applications include:

- Predictive maintenance sensors that detect equipment anomalies before failures occur

- Smart agriculture monitors that analyze soil conditions and crop health in real-time

- Wearable health devices that provide continuous monitoring without frequent recharging

- Voice activation systems for IoT devices that process commands locally

According to ABI Research, approximately 2.5 billion devices with TinyML chipsets will be shipped worldwide by 2030, highlighting the explosive growth of this technology.

2. Meta’s LLaMA: Open-Source Efficiency

Meta’s LLaMA family represents a significant advancement in open-source, efficient language models. The latest iterations, including LLaMA 3.2 with 1 billion and 3 billion parameter versions, demonstrate that smaller models can deliver impressive performance when trained on high-quality data.

These models excel at:

- Text generation and summarization with minimal computational overhead

- Multilingual capabilities across dozens of languages

- Code generation and explanation for developer assistance

- On-device intelligence for mobile applications

The open-source nature of LLaMA has accelerated innovation, allowing researchers and developers to build upon and customize these models for specific applications without starting from scratch.

3. Google’s MobileBERT: Optimized for Mobile

MobileBERT exemplifies how careful architectural design can create models specifically optimized for mobile environments. This compact variant of BERT is 4.3 times smaller and 5.5 times faster than BERT-Base while remaining competitive on standard benchmarks: its reported GLUE score is only 0.6 points lower than that of BERT-Base.

MobileBERT enables:

- On-device natural language understanding for mobile applications

- Real-time text analysis without cloud dependencies

- Enhanced privacy for sensitive user interactions

- Reduced battery consumption for language processing tasks

The model uses a bottleneck architecture and a carefully designed knowledge distillation process to transfer capabilities from a larger BERT-style teacher model while maintaining efficiency.

4. Microsoft’s Phi-3: Small But Mighty

Microsoft’s Phi-3 family, particularly the Phi-3-mini with 3.8 billion parameters, demonstrates the “small but mighty” approach to AI model development. These models achieve remarkable performance on reasoning and language understanding benchmarks, sometimes outperforming models twice their size.

Phi-3 excels in:

- Complex reasoning tasks with minimal computational resources

- Document summarization and content generation

- Powering efficient chatbots and virtual assistants

- Edge deployment scenarios requiring balanced performance and efficiency

Microsoft attributes Phi-3’s impressive capabilities to its training on exceptionally high-quality, carefully curated data rather than simply massive quantities of information.

5. IBM’s Granite: Enterprise-Ready Efficiency

IBM’s Granite series includes small language models specifically designed for enterprise applications. With 2 and 8 billion parameter versions, these models balance efficiency with the robust capabilities required for business environments.

Granite models are particularly valuable for:

- Cybersecurity applications requiring rapid threat analysis

- Enterprise knowledge management and information retrieval

- Automated customer support and service operations

- Business intelligence and data analysis

The models are designed to work well in retrieval-augmented generation (RAG) workflows, in which the model consults external knowledge bases for improved accuracy while staying computationally efficient.

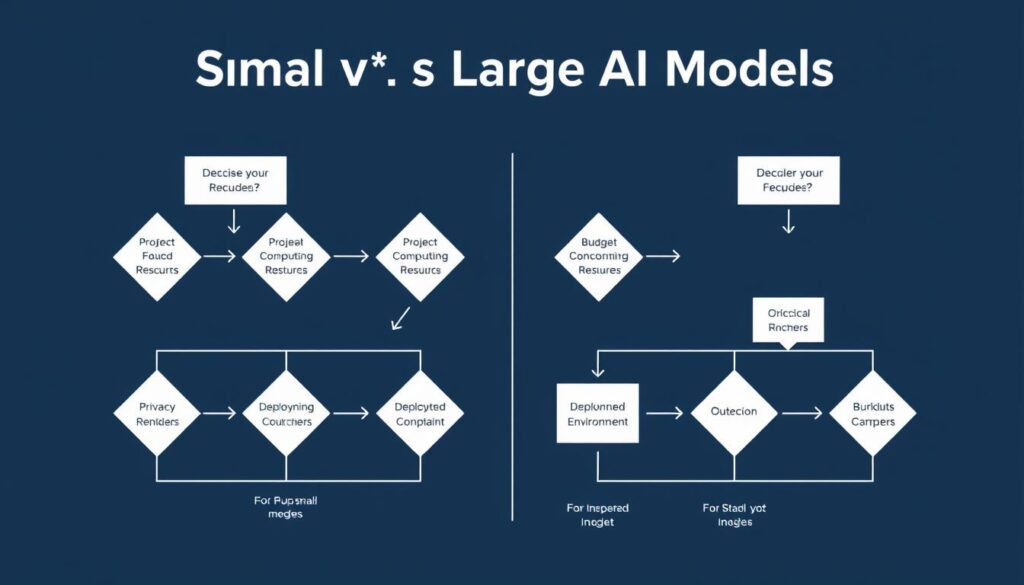

Small vs. Large AI Models: A Comparative Analysis

Understanding the differences between small and large AI models is crucial for making informed implementation decisions. While each approach has distinct advantages, the right choice depends on your specific use case, resources, and requirements.

| Characteristic | Small AI Models | Large AI Models |
| --- | --- | --- |
| Parameter Count | Millions to a few billion | Tens to hundreds of billions |
| Energy Consumption | Low (the smallest TinyML models run on milliwatts) | High (requires significant power) |
| Training Resources | Moderate computing needs | Massive GPU clusters required |
| Inference Speed | Fast (milliseconds to seconds) | Slower (seconds to minutes) |
| Deployment Options | Edge, mobile, embedded, cloud | Primarily cloud-based |
| Task Specialization | Often domain-specific | General-purpose capabilities |
| Data Privacy | Enhanced (on-device processing) | Typically requires data transmission |
| Implementation Cost | Lower ($thousands) | Higher ($millions) |
| Primary Use Cases | Edge computing, IoT, mobile, specialized tasks | Complex reasoning, research, general AI services |

This comparison highlights that small models aren’t simply scaled-down versions of their larger counterparts—they represent a fundamentally different approach to AI implementation. Organizations should evaluate their specific needs across these dimensions when determining the most appropriate model size for their applications.

Navigating Trade-offs: Balancing Efficiency and Performance

Implementing small & efficient AI models involves careful consideration of trade-offs between computational efficiency and model performance. Understanding these trade-offs and the techniques to mitigate them is essential for successful deployment.

Common Challenges and Solutions

Challenge: Reduced Accuracy

Smaller models may experience some performance degradation compared to their larger counterparts, particularly for complex tasks requiring broad knowledge.

Solutions:

- Domain-specific fine-tuning: Tailoring models to specific use cases can significantly improve performance on targeted tasks.

- Retrieval-augmented generation (RAG): Supplementing small models with external knowledge bases can enhance their capabilities without increasing model size.

- Ensemble approaches: Combining multiple small models can achieve better performance than a single model while maintaining efficiency.
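
As an illustration of the retrieval-augmented approach listed above, the sketch below retrieves the most relevant snippet from a tiny in-memory knowledge base and places it in the prompt that would be sent to a small model. It is a toy under stated assumptions: the word-count cosine similarity stands in for a real embedding model, and `retrieve` and `build_prompt` are hypothetical helper names rather than functions from any particular library.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Return the top_k documents most similar to the query."""
    query_vec = Counter(tokenize(query))
    scored = [
        (cosine_similarity(query_vec, Counter(tokenize(doc))), doc)
        for doc in documents
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_prompt(query: str, documents: list[str], top_k: int = 1) -> str:
    """Prepend the retrieved context to the question for the small model."""
    context = "\n".join(retrieve(query, documents, top_k))
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


KNOWLEDGE_BASE = [
    "Quantization converts 32-bit weights to 8-bit integers.",
    "Pruning removes unimportant connections from a network.",
    "Federated learning trains models without centralizing data.",
]

if __name__ == "__main__":
    print(build_prompt("How does pruning shrink a network?", KNOWLEDGE_BASE))
```

The knowledge lives outside the model, so it can be updated or expanded without retraining and without adding parameters.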

Challenge: Limited Context Windows

Small models often have shorter context windows, limiting their ability to process and understand lengthy inputs.

Solutions:

- Sliding window attention: Restricting each token’s attention to a fixed window of nearby tokens, so that compute and memory grow roughly linearly with input length instead of quadratically.

- Hierarchical processing: Breaking down complex inputs into smaller components for efficient processing.

- Specialized architectures: Designing models specifically optimized for handling longer contexts within resource constraints.
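
To show why sliding window attention saves work, here is a minimal sketch that builds a causal sliding-window attention mask in plain Python. Each token may attend only to itself and a fixed number of preceding tokens. The function names and the window size are illustrative assumptions; production models implement this inside optimized attention kernels.

```python
def sliding_window_mask(seq_len: int, window: int) -> list[list[bool]]:
    """Build a causal sliding-window mask.

    mask[i][j] is True when token i may attend to token j, that is, when
    j is token i itself or one of the window - 1 tokens just before it.
    """
    if window < 1:
        raise ValueError("window must be at least 1")
    return [
        [0 <= i - j < window for j in range(seq_len)]
        for i in range(seq_len)
    ]


def attended_pairs(mask: list[list[bool]]) -> int:
    """Count how many (query, key) pairs the mask allows."""
    return sum(sum(row) for row in mask)


if __name__ == "__main__":
    windowed = sliding_window_mask(seq_len=6, window=3)
    full_causal = sliding_window_mask(seq_len=6, window=6)
    print(attended_pairs(windowed), "pairs with a window of 3")
    print(attended_pairs(full_causal), "pairs with full causal attention")
```

With a fixed window, the number of allowed pairs grows in proportion to the sequence length, whereas full attention grows with its square.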

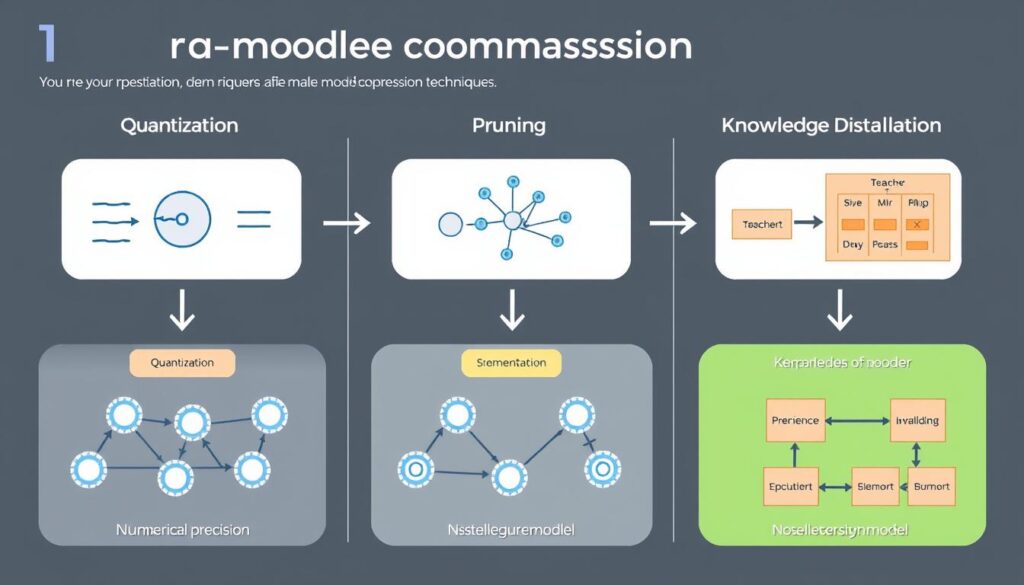

Effective Optimization Techniques

Several proven techniques can help maintain performance while reducing model size:

Quantization

Quantization reduces the precision of model weights, converting 32-bit floating-point numbers to 8-bit integers or even lower precision. This technique can reduce model size by 75% or more while maintaining most of the original performance. Post-training quantization (PTQ) applies this technique after a model is trained, while quantization-aware training (QAT) incorporates it during the training process for better results.
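
The idea can be sketched in a few lines. The example below applies simple symmetric post-training quantization to a list of weights, mapping floats to 8-bit integers with a single scale factor and then mapping them back. It is a simplified illustration, not how any specific framework implements quantization; real toolchains typically quantize per channel or per group and calibrate activations as well.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map float weights to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.0, 1.0]
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    print("quantized:", q)
    print("max round-trip error:", max(abs(w - r) for w, r in zip(weights, restored)))
    # Storing 8 bits instead of 32 bits per weight removes 75% of the size.
    print("size reduction:", 1 - 8 / 32)
```

Each restored weight differs from the original by at most half a quantization step, which is why accuracy often survives the conversion.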

Pruning

Pruning removes less important connections in neural networks, essentially “trimming the fat” from overparameterized models. Research shows that many large models contain significant redundancy, and careful pruning can remove 30-90% of parameters with minimal impact on performance, depending on the model and task. Techniques include magnitude-based pruning, which removes the weights with the smallest absolute values, and structured pruning, which removes entire neurons or channels.
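
A minimal sketch of magnitude-based pruning follows. It zeroes out the requested fraction of weights with the smallest absolute values and leaves the rest untouched. The function name and the flat list of weights are simplifying assumptions; in practice pruning is applied per layer and usually followed by fine-tuning to recover accuracy.

```python
def magnitude_prune(weights: list[float], sparsity: float) -> list[float]:
    """Zero out the given fraction of weights with the smallest magnitudes."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    num_to_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = set(order[:num_to_prune])
    return [0.0 if i in pruned else w for i, w in enumerate(weights)]


if __name__ == "__main__":
    weights = [0.9, -0.05, 0.3, 0.01, -0.7, 0.2]
    print(magnitude_prune(weights, sparsity=0.5))
```

The zeroed weights only save memory and compute when the storage format or hardware can exploit sparsity, which is one reason structured pruning is often preferred for deployment.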

Knowledge Distillation

Knowledge distillation transfers knowledge from a larger “teacher” model to a smaller “student” model. In its basic form, the student is trained to match the teacher’s softened output probabilities rather than only the hard labels, and some variants also have the student mimic the teacher’s internal representations. This approach has proven remarkably effective, with models like DistilBERT retaining 97% of BERT’s performance while being 40% smaller and 60% faster.
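
The core of the training signal can be sketched as a loss function. The example below follows a common formulation: soften both the teacher and student logits with a temperature, measure the KL divergence between the two distributions, and scale the result by the temperature squared. The function names and the temperature value are illustrative assumptions, and a full training loop would usually combine this term with the ordinary loss on the true labels.

```python
import math


def softmax(logits: list[float], temperature: float = 1.0) -> list[float]:
    """Numerically stable softmax over temperature-scaled logits."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(
    student_logits: list[float],
    teacher_logits: list[float],
    temperature: float = 2.0,
) -> float:
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(
        p * math.log(p / q)
        for p, q in zip(p_teacher, p_student)
        if p > 0
    )
    return kl * temperature ** 2


if __name__ == "__main__":
    teacher = [4.0, 1.0, 0.5]
    print("matching student:", distillation_loss(teacher, teacher))
    print("mismatched student:", distillation_loss([0.5, 1.0, 4.0], teacher))
```

A higher temperature spreads probability across the non-top classes, exposing the teacher's relative preferences among wrong answers, which is information the hard labels do not carry.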

Federated Learning

Federated learning enables model training across distributed devices without centralizing data. This approach is particularly valuable for small models deployed at the edge, allowing them to continuously improve based on local data while preserving privacy. The technique has shown promise in healthcare, mobile applications, and IoT environments where data sensitivity is a concern.
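
The aggregation step at the heart of this approach can be sketched briefly. In the example below, each device trains locally and sends back only its model weights, and a coordinator averages them, weighting each device by how many training examples it used. This mirrors the widely used federated averaging idea in simplified form; the function name and the flat weight lists are assumptions for illustration, and real systems add secure aggregation, client sampling, and multiple rounds.

```python
def federated_average(
    client_weights: list[list[float]],
    client_sizes: list[int],
) -> list[float]:
    """Average client weight vectors, weighted by local dataset size."""
    if len(client_weights) != len(client_sizes):
        raise ValueError("need one dataset size per client")
    total = sum(client_sizes)
    num_params = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(num_params)
    ]


if __name__ == "__main__":
    # Two hypothetical devices; the second trained on three times more data.
    updates = [[1.0, 2.0], [3.0, 4.0]]
    sizes = [1, 3]
    print(federated_average(updates, sizes))
```

Only the weight vectors leave the devices, so the raw data that produced them never has to be collected in one place.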

Expert Insights: The Future of Small & Efficient AI

Industry experts and researchers are increasingly recognizing the strategic importance of small and efficient AI models. Their insights provide valuable perspective on current developments and future directions in this rapidly evolving field.

“The future of machine learning is tiny. The ability to run these models on small, cheap hardware will transform how we build intelligent devices. We’re moving from a world where AI requires massive data centers to one where intelligence can be embedded everywhere.”

“Smaller models can achieve remarkable performance, challenging the notion that larger models are inherently superior in AI capabilities. The key is not just reducing size, but rethinking how we design and train these systems from the ground up.”

“By reducing energy consumption, tiny AI minimizes carbon emissions and lowers infrastructure costs, making sustainable AI solutions economically viable. This isn’t just good for the planet—it’s good business.”

Emerging Trends to Watch

Hardware Co-design

The future will see increased collaboration between hardware and AI model designers, creating specialized chips optimized for small model inference. This co-design approach promises to further enhance efficiency and performance beyond what software optimization alone can achieve.

Adaptive Intelligence

Next-generation small models will feature on-device learning capabilities, allowing them to continuously adapt to user behavior and environmental conditions. This personalization will significantly enhance performance without requiring cloud connectivity or massive model sizes.

Hybrid Approaches

Intelligent routing systems will emerge that dynamically select between small local models and larger cloud models based on task complexity, available resources, and privacy requirements. This hybrid approach offers the best of both worlds for many applications.

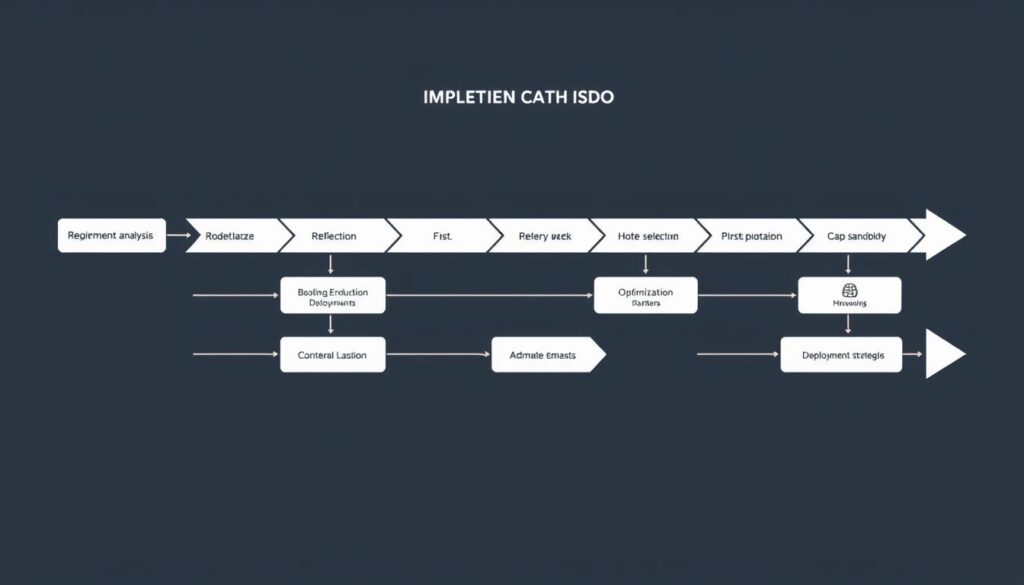

Implementing Small AI Models: Practical Considerations

For organizations looking to adopt small and efficient AI models, several practical considerations can help ensure successful implementation and maximize the benefits of this approach.

Selecting the Right Model for Your Needs

The first step is determining which small model architecture best aligns with your specific requirements. Consider these factors:

- Task specificity: Define exactly what you need the model to accomplish. More focused tasks often allow for smaller, more efficient models.

- Performance requirements: Establish minimum acceptable performance thresholds for your application.

- Deployment environment: Consider where the model will run—mobile devices, edge hardware, or server infrastructure—and the associated constraints.

- Privacy and security needs: Determine if data must remain on-device or can be processed in the cloud.

- Development resources: Assess your team’s expertise with model optimization techniques.

Integration Strategies

Successfully integrating small AI models into existing systems requires thoughtful planning:

On-Device Integration

- Optimize for the specific hardware capabilities of your target devices

- Implement efficient memory management to minimize resource usage

- Consider battery impact for mobile and IoT implementations

- Develop fallback mechanisms for when model performance is insufficient

Hybrid Cloud-Edge Approaches

- Implement intelligent routing between local and cloud models

- Develop clear criteria for when to use each processing location

- Ensure seamless user experience regardless of processing location

- Design for graceful degradation when connectivity is limited
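
The routing criteria above can be captured in a small decision function. The sketch below is purely illustrative: the `Request` fields, the word-count threshold, and the `"local"` and `"cloud"` labels are hypothetical choices, and a real router would likely use richer signals such as a confidence score from the local model.

```python
from dataclasses import dataclass


@dataclass
class Request:
    text: str
    contains_sensitive_data: bool = False


def route(request: Request, online: bool, max_local_words: int = 200) -> str:
    """Decide whether a request goes to the local model or the cloud model."""
    # Privacy first: sensitive data never leaves the device.
    if request.contains_sensitive_data:
        return "local"
    # Graceful degradation: without connectivity, the local model handles everything.
    if not online:
        return "local"
    # Long inputs are treated as a rough proxy for task complexity.
    if len(request.text.split()) > max_local_words:
        return "cloud"
    return "local"


if __name__ == "__main__":
    short = Request("Turn on the hallway lights")
    long_doc = Request("word " * 500)
    private = Request("word " * 500, contains_sensitive_data=True)
    print(route(short, online=True))
    print(route(long_doc, online=True))
    print(route(long_doc, online=False))
    print(route(private, online=True))
```

Ordering the checks so that privacy and connectivity come before complexity keeps the criteria explicit and easy to audit.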

Measuring Success

Establish clear metrics to evaluate your implementation:

- Performance metrics: Accuracy, precision, recall, F1 score, or domain-specific measures

- Efficiency metrics: Inference time, memory usage, energy consumption

- Business metrics: Cost savings, new capabilities enabled, user satisfaction

- Sustainability metrics: Carbon footprint reduction, energy efficiency improvements
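
Two of these metric families are easy to compute yourself. The sketch below calculates precision, recall, and F1 for a binary classifier, and measures average inference latency for any prediction function. The helper names are assumptions for illustration, and latency should be measured on the actual target hardware, since results from a development machine rarely carry over.

```python
import time
from typing import Callable, Sequence


def precision_recall_f1(y_true: Sequence[int], y_pred: Sequence[int]) -> tuple[float, float, float]:
    """Compute precision, recall, and F1 for binary labels (1 = positive)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


def mean_latency_ms(predict: Callable, inputs: Sequence, repeats: int = 5) -> float:
    """Average wall-clock time per prediction, in milliseconds."""
    start = time.perf_counter()
    for _ in range(repeats):
        for x in inputs:
            predict(x)
    elapsed = time.perf_counter() - start
    return elapsed * 1000 / (repeats * len(inputs))


if __name__ == "__main__":
    y_true = [1, 0, 1, 1, 0]
    y_pred = [1, 1, 1, 0, 0]
    print(precision_recall_f1(y_true, y_pred))
    # A stand-in for a real model's predict function.
    print(mean_latency_ms(lambda x: x * 2, inputs=[1, 2, 3]))
```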

Common Pitfalls to Avoid

Watch Out For:

- Overly aggressive optimization that compromises critical performance

- Insufficient testing across diverse inputs and edge cases

- Neglecting to measure real-world performance on target hardware

- Failing to establish a monitoring system for deployed models

- Not planning for model updates and maintenance

Conclusion: Embracing the Small & Efficient AI Revolution

The rise of small and efficient AI models represents a fundamental shift in how we approach artificial intelligence. As we’ve explored throughout this article, these compact models offer compelling advantages in terms of cost, accessibility, privacy, and environmental impact—often while delivering performance comparable to much larger systems for specific tasks.

The “bigger is better” paradigm that has dominated AI development is giving way to a more nuanced understanding that recognizes the value of right-sizing models for their intended applications. This approach not only makes advanced AI capabilities accessible to a broader range of organizations but also aligns with growing concerns about computational sustainability and responsible AI development.

Actionable Next Steps

For organizations looking to leverage small and efficient AI models, consider these practical next steps:

- Audit your current AI implementations to identify opportunities where small models could replace larger, resource-intensive systems.

- Experiment with open-source small models like LLaMA, Phi-3, or MobileBERT to understand their capabilities and limitations firsthand.

- Invest in knowledge and skills related to model optimization techniques such as quantization, pruning, and knowledge distillation.

- Develop a strategic roadmap for transitioning appropriate applications to more efficient AI architectures over time.

- Partner with specialists who have experience implementing small models in your specific industry or use case.

As the field continues to evolve, organizations that embrace the potential of small and efficient AI models will be well-positioned to build more sustainable, accessible, and privacy-preserving AI systems. The future of AI isn’t just about building bigger models—it’s about building smarter, more efficient ones that can be deployed wherever they’re needed most.

Frequently Asked Questions

What exactly are small & efficient AI models?

Small & efficient AI models are machine learning models designed with significantly fewer parameters than traditional large models. They typically range from a few million to a few billion parameters, compared to hundreds of billions in large models. These compact models are optimized for specific tasks while requiring less computational power, memory, and energy to run.

Do small AI models perform as well as large models?

For many specific tasks, small AI models can perform comparably to or even better than large models. While they may not match the breadth of capabilities of massive general-purpose models, they often excel in their targeted domains. Techniques like knowledge distillation allow small models to learn from larger ones, retaining much of their performance while dramatically reducing resource requirements.

What industries benefit most from small AI models?

Industries with resource constraints, privacy concerns, or real-time processing needs benefit most from small AI models. These include healthcare (for medical devices and point-of-care diagnostics), IoT (for smart sensors and edge devices), manufacturing (for predictive maintenance), mobile applications, automotive systems, and any field requiring on-device intelligence without cloud connectivity.

How much can small AI models reduce costs?

Cost savings from small AI models can be substantial, in favorable cases reducing expenses by as much as 70-90% compared to large model implementations, though actual savings depend heavily on workload, hardware, and deployment model. These savings come from lower infrastructure requirements, reduced cloud computing costs, decreased energy consumption, and simplified deployment processes. For organizations with multiple AI applications, the savings can add up to significant sums each year.

What are the main techniques for creating small AI models?

The primary techniques for creating small AI models include: 1) Knowledge distillation, where a smaller model learns from a larger one; 2) Pruning, which removes less important connections in neural networks; 3) Quantization, which reduces the precision of model weights; 4) Efficient architecture design, creating models specifically optimized for size and performance; and 5) Task-specific training on high-quality data rather than massive general datasets.
